Something uncomfortable is happening to a lot of marketing teams right now. Their brand ranks well in Google, content is shipping on schedule, dashboards look fine, and yet competitors are quietly racking up mentions in ChatGPT, Perplexity, and Google's AI Overviews. If your inbound pipeline has softened despite stable rankings, LLM visibility is usually part of the explanation. The difference between traffic metrics and visibility metrics has never carried more weight.

If you're trying to figure out how to benchmark LLM visibility for competitors, you need a process you can rerun without turning it into a quarterly science project. You'll build a buyer-intent query set, score outputs with a rubric, spot patterns across models, then turn the gaps into content and positioning fixes. No fluff. Just the method and the mistakes that waste weeks.

Why Competitor LLM Benchmarking Is Different From Traditional Competitive Analysis

Competitive analysis used to feel like a solved problem. Plug a domain into a tool, pull keywords, backlinks, traffic estimates. Done.

LLM visibility is messier, and it shows up earlier in the buyer journey. Traditional competitive analysis tells you where a competitor ranks on page one. LLM visibility tells you whether an AI system recommends them by name when a potential customer asks a buying question. Those are different signals, and mixing them leads to bad prioritization. The actions you'd take to move from position 6 to position 3 are not the same actions that get your brand into an AI-generated recommendation.

A brand sitting at #4 for a keyword can still be the first name ChatGPT surfaces when someone asks "what's the best tool for X." The reverse is equally common: a brand that dominates organic search can be completely absent from AI-generated recommendations. I've seen both happen in the same competitive set within the same quarter. That disconnect is exactly why benchmarking LLM visibility for competitors needs its own framework, separate from your SEO dashboards.

Step 1: Build Your Query Set

Start with 20 to 30 prompts that mirror how real buyers talk, not how marketers write. "Best AI marketing tools" and "what should I use to automate my content pipeline" are not the same prompt. LLMs respond differently to each, and brand visibility shifts accordingly.

Because most teams skip this step, the data ends up unreliable. Sanity-check your competitor set before you lock the prompts. LLMs surface brands you'd never treat as direct rivals in SEO, including niche tools that win because their content is clearer, more structured, or more frequently cited. If you've ever run a few prompts and thought, "Wait, why are they in this list?", that's the point.

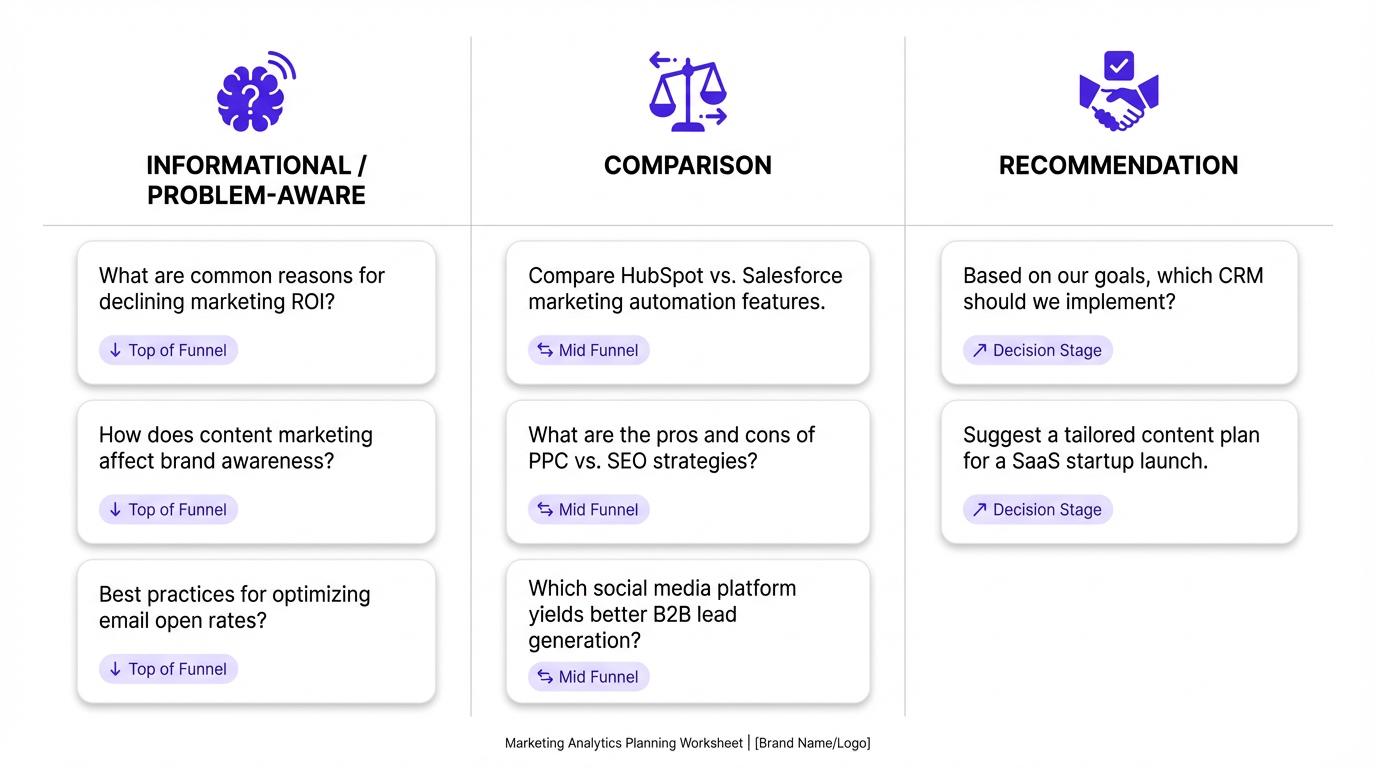

Use three intent buckets so you can see where competitors win: top-of-funnel education, head-to-head evaluation, and "tell me what to buy" moments.

- Recommendation queries: "What are the best tools for [use case]?" or "Which platform should I use for [job to be done]?"

- Comparison queries: "How does [your brand] compare to [competitor]?" or "What's the difference between X and Y?"

- Problem-aware queries: "How do I solve [specific pain point]?" where your category is the implied solution

Keep the prompts conversational. Nobody types "Please enumerate the top enterprise marketing automation platforms ranked by feature completeness" into a chatbot. They type something like "What's a good marketing tool for a small team?" If you test with stiff, keyword-stuffed prompts, you'll get stiff, keyword-stuffed answers. Garbage in, garbage out.

Run each query across at least two LLMs. ChatGPT and Perplexity are the most relevant for B2B buying contexts right now. Google's AI Overviews matters if your audience skews search-first. Testing a single platform and calling it done will give you a partial picture, and you'll miss platform-specific patterns that reveal where competitors have focused their content efforts.

Step 2: Capture and Score the Outputs

Raw outputs are useless without a consistent scoring rubric. Build it before you start collecting data. The most common mistake I see is pulling 50 responses and then trying to decide what to measure afterward. You end up with a pile of qualitative notes and no way to track change over time.

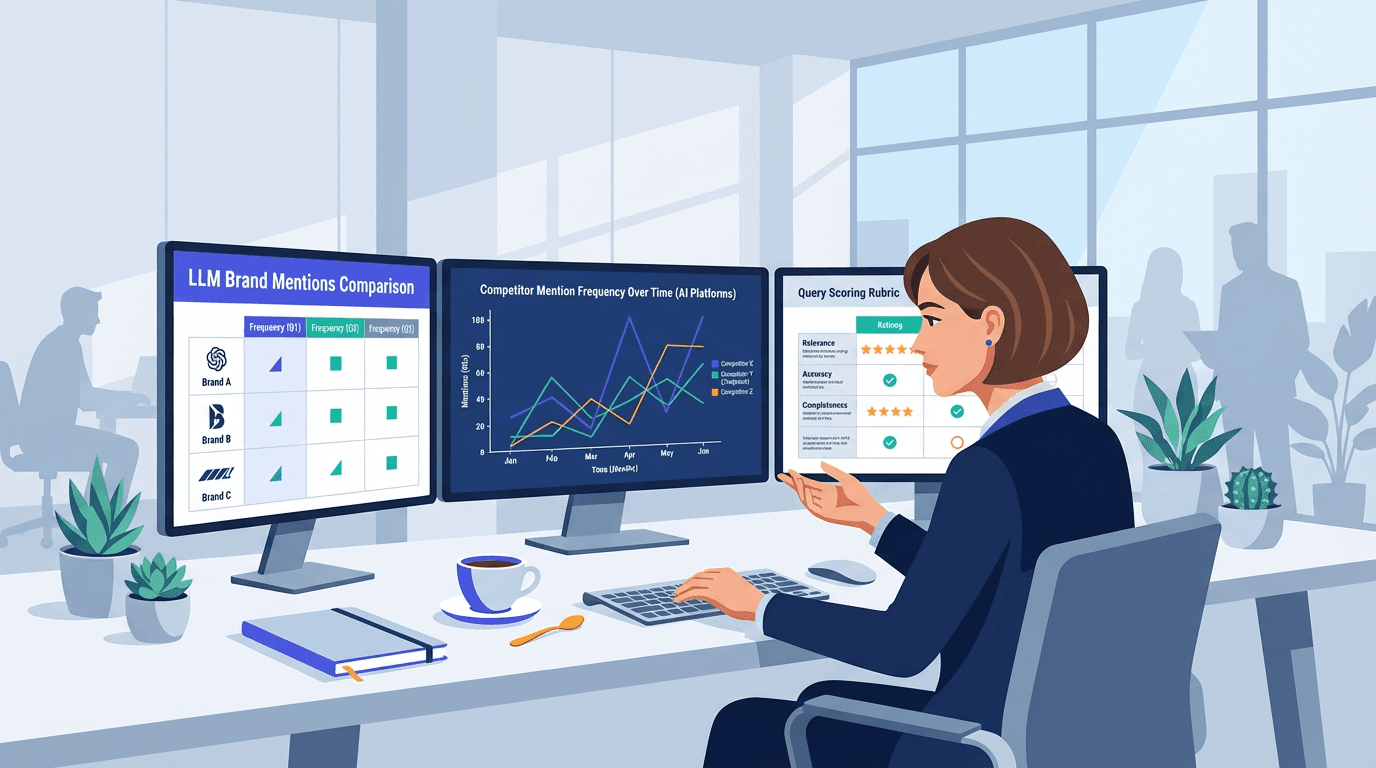

| Visibility Level | What It Looks Like | Score |

|---|---|---|

| Not mentioned | Brand absent from response entirely | 0 |

| Passive mention | Brand named but not described or recommended | 1 |

| Active mention | Brand described with context or use case | 2 |

| Primary recommendation | Brand listed first or called out as top choice | 3 |

| Sole recommendation | Brand is the only option mentioned | 4 |

| Apply this rubric per query, per LLM, per competitor. Aggregate scores reveal visibility gaps fast. |

Track each competitor separately, with columns for query, LLM platform, brand mentioned, visibility score, and sentiment framing (positive, neutral, or qualified with caveats). That last column matters more than most people expect. A mention paired with "but it's expensive" or "limited integrations" is not the same as a clean recommendation.

One contrarian take: don't obsess over raw mention counts in the early runs. Position in the answer and the language around the mention usually predicts real-world impact better than a simple tally. Being the first recommendation in 6 out of 20 prompts beats being the fifth name in 14 out of 20. Frequency without context is a vanity metric in this space.

Step 3: Identify Visibility Patterns Across Your Competitive Set

After two to three weeks of data, patterns emerge quickly. Some competitors dominate on ChatGPT but barely register on Perplexity. Others get mentioned constantly for one use case and go invisible for adjacent ones. Those gaps are where your opportunity lives, and they're often more actionable than anything you'd find in a traditional keyword gap analysis.

Pay close attention to which competitors get cited with sources. Perplexity pulls from indexed content, so a citation link tells you something about their content strategy, not just their brand awareness. A competitor getting cited because of a detailed integration guide or a well-structured comparison page is solving a different problem than one who gets mentioned because they've been around for a decade and the model has absorbed their name through sheer volume of web presence.

Sentiment drift across query types is another signal people miss. A competitor scoring well on recommendation queries but poorly on comparison queries usually has strong positioning but weak differentiation messaging. That's an opening you can exploit with targeted content.

If you've tried this and gotten inconsistent results, you're not alone. Small prompt changes can swing the output. That's why you're looking for patterns across a set, not treating any single answer like gospel. Consistency across 20+ queries is the signal. Any individual response is just noise.

Step 4: Set Baselines and Run Benchmarks on a Cadence

A one-time snapshot is interesting. A monthly benchmark is actually useful. LLM outputs shift as models update, as new content gets indexed, and as brand authority evolves. What holds in January may look completely different by April, especially in competitive categories where multiple brands are actively publishing.

Set your baseline in the first month, then rerun the same query set every four weeks. Keep the prompts identical between runs. If you swap queries mid-cycle, you lose the ability to distinguish real movement from prompt variation. Consistency in your inputs is what makes the output data trustworthy over time.

For teams that want to automate this, Vizup's AI-powered organic marketing platform tracks LLM brand mentions and competitor visibility at scale, so you're not manually running 30 prompts across three platforms every month. Doing it by hand at least once is still worth the time. You'll catch nuances in how LLMs frame recommendations that automated scoring sometimes flattens.

What to Do With the Benchmark Data

Benchmarking without action is expensive data collection. Once you know where competitors outperform you in AI-generated answers, the fix is usually content-based. But not in the lazy "publish more" way.

Start by diagnosing why the gap exists. From what we've seen across audits, the gap usually falls into one of three buckets: you're under-explained for a use case (a content coverage problem), you're mis-framed (a positioning and differentiation problem), or you're getting dragged by repeated caveats in AI responses (a sentiment and objections problem). Each one has a different fix, and conflating them wastes time.

Here's the part most guides get wrong: LLMs don't reward generic category pages. They reward clarity. If a competitor consistently gets recommended for "AI sales automation" and you don't, the answer is rarely "run more ads." It's "publish better, more specific content on that topic and get it cited." That means use-case pages, integration pages, docs that answer real objections, and a few genuinely helpful comparisons that don't read like a hit piece.

If you want a faster way to turn benchmark gaps into briefs your team will actually ship, the competitor analysis prompt library is a practical starting point.

One more opinionated note: chasing "AI optimization tricks" is overrated. If your product positioning is fuzzy and your best answers live in sales calls instead of on the site, you'll keep losing the narrative in AI responses. Fix the basics first. Make sure your site clearly articulates what you do, who it's for, and why it's different. Then measure again. If you want a cleaner way to track progress without turning this into a monthly spreadsheet ritual, Vizup is built for exactly this workflow.

Frequently Asked Questions

How often should I re-run LLM visibility benchmarks for competitors?

Monthly is the right cadence for most teams. LLM outputs shift with model updates and content changes, so quarterly benchmarking often misses meaningful movement. If you're in a fast-moving competitive category, bi-weekly runs on your top 10 queries can catch early signals before they become entrenched patterns.

Which LLMs should I include in my competitor benchmarking?

ChatGPT and Perplexity are the highest priority for B2B marketing contexts. Google's AI Overviews matters if your audience is search-first. Claude is worth including if your competitors are in the developer or technical tools space. Start with clean data from two platforms before expanding to more, so you can establish reliable baselines without overwhelming your process.

Can I benchmark LLM visibility without a paid tool?

Yes, manually. Build a spreadsheet, run your query set across platforms, and score outputs using a consistent rubric. For a 25-query set across two platforms, expect roughly 3 to 4 hours per benchmark run. That's manageable monthly for small teams. At scale, a platform like Vizup automates collection and scoring so you're not doing it by hand every cycle.

What's the difference between LLM visibility and traditional SEO rankings?

SEO rankings show where a page appears in a list of search results. LLM visibility shows whether an AI system names your brand in a conversational recommendation. A brand can rank #1 organically and still be absent from AI-generated answers, and vice versa. They measure different things and require different strategies to improve, which is why benchmarking both separately gives you a more complete competitive picture.

How do I know if a competitor's LLM visibility is driven by content or brand age?

Check whether their mentions come with source citations, which is common in Perplexity. Citations point to content-driven visibility, which is replicable. Uncited mentions in ChatGPT often reflect training data weight, which correlates with brand age and overall web presence volume. Content-driven visibility is the one you can realistically compete with in the short term through targeted publishing and better site structure.

What metrics should I track for competitor LLM visibility benchmarking?

Track visibility score (0 to 4), position in the answer (first, middle, last), sentiment framing (positive, neutral, qualified), and whether the model cites a source (especially in Perplexity). Those four fields are enough to spot real movement without overcomplicating your spreadsheet.

How do I turn LLM visibility benchmarks into an action plan?

Pick the 5 to 10 prompts where competitors are primary recommendations and you're absent or qualified negatively. Then map each gap to one fix: a missing use-case page, a weak comparison page, an integration guide that doesn't exist yet, or an objections page that answers the caveat the model keeps repeating.