Microsoft Clarity AI Citations is now open to everyone, closing out the limited rollout that left most teams waiting. If Clarity is already installed, you can start measuring how your content gets cited inside AI-generated answers today: no waitlist, no enterprise plan. For most marketers, this is the first time AI citation tracking is available for free at scale, using real grounding signals instead of simulated prompt tests.

AI answers are turning into both a traffic channel and a brand liability. When Copilot or ChatGPT cites a competitor instead of your page, you lose the narrative before anyone even lands on your site. This walkthrough focuses on setup, a tour of all six dashboard views, and a weekly operating loop that turns citation data into concrete content fixes. The sequence is simple: enable it, read the views, close the gaps, then track the impact.

What Microsoft Clarity AI Citations Actually Measures (and What It Doesn't)

AI citations are not rankings. In Clarity, a citation is a grounding or attribution event: the moment an AI system uses your content to assemble an answer, with or without a click. Microsoft Clarity frames this as influence that happens "upstream" of visits, capturing when AI systems rely on your site even if the user never clicks through. That framing changes how you report the metric and what you do with it.

Scope matters just as much as definitions. Clarity’s AI Citations reflect Microsoft’s grounding ecosystem: Copilot experiences and ChatGPT-via-Bing. It doesn’t include Google AI Overviews, Perplexity, or Claude. If you’re responsible for cross-engine AI search visibility, treat Clarity as the Microsoft layer and use separate tooling to monitor the other engines. Otherwise, it’s easy to mistake a strong citation rate in one ecosystem for broad market coverage.

Before You Start: Prerequisites and Tracking Hygiene

Confirm these three things before enabling the feature:

- Clarity is installed and verified on the correct property. Make sure the tracking tag fires on the domain (or subdomain) you actually want to measure; get this wrong and you’ll end up with zero citation data for the site you care about.

- UTM conventions and referrer handling are consistent. AI referral traffic in Clarity can disappear if your analytics stack strips or rewrites referrers. Check tag manager rules before you set a baseline.

- Ownership is assigned. The dashboard will surface action items quickly. Decide up front whether SEO, content, or marketing ops owns the weekly review; unclear ownership is how this dies in a backlog.
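One quick way to audit tag manager rules is to publish a few test links with known UTM parameters, then compare them with the landing URLs your analytics actually recorded. A minimal sketch using Python's standard `urllib.parse`; the function names and test URLs are hypothetical placeholders, and it checks only UTM handling, not the referrer header itself:

```python
from urllib.parse import urlparse, parse_qs

def utm_params(url: str) -> dict[str, list[str]]:
    """Extract utm_* query parameters from a URL (parse_qs returns lists of values)."""
    query = parse_qs(urlparse(url).query)
    return {key: values for key, values in query.items() if key.startswith("utm_")}

def lost_utm_params(expected_url: str, recorded_url: str) -> list[str]:
    """List utm_* parameters that were dropped or rewritten between the link
    you published and the URL your analytics recorded."""
    expected = utm_params(expected_url)
    recorded = utm_params(recorded_url)
    return sorted(key for key, values in expected.items() if recorded.get(key) != values)

print(lost_utm_params(
    "https://example.com/guide?utm_source=newsletter&utm_medium=email",
    "https://example.com/guide?utm_source=newsletter",
))  # ['utm_medium']
```

An empty list for every test link is the result you want before setting a baseline.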

Enable Microsoft Clarity AI Citations: Setup Walkthrough

Step 1: Find the AI Citations Entry Point in Clarity

Sign into Microsoft Clarity and open the right project. In the left nav, look for an "AI" or "AI Visibility" section; the label varies depending on rollout status, but it lives near Heatmaps and Recordings. From there, open the AI Citations tab. For your first pass, confirm the project, set the range to the last 28 days, and remove any filters. A clean baseline is more useful than a pre-filtered view this early.

Step 2: Confirm Data Collection and Avoid False Negatives

If you’re staring at an empty dashboard, the usual culprits are low query volume in your niche, a newly installed tag that hasn’t had time to accumulate signal, or a property mismatch. Sanity-check by opening Clarity’s traffic acquisition view and looking at the AI Platform channel group. If you see sessions attributed to Copilot or Bing AI, the tag is firing and citations should appear as query volume builds. Give new implementations 7 to 14 days before you call it a miss.
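The troubleshooting order above can be written as a small decision function, which helps if you run the same check across several Clarity projects. A sketch only: the function name and inputs are hypothetical (values you read off the dashboard by hand), and the 14-day cutoff is the upper end of the window suggested here:

```python
def citation_data_status(days_since_install: int, ai_platform_sessions: int, citations_seen: int) -> str:
    """Rough triage for an empty AI Citations dashboard, following the checks above."""
    if citations_seen > 0:
        return "collecting"
    if days_since_install < 14:
        # New tags need time to accumulate signal.
        return "too early: allow 7 to 14 days"
    if ai_platform_sessions > 0:
        # AI Platform sessions prove the tag fires; low query volume is the likely cause.
        return "tag firing: citations should appear as query volume builds"
    return "investigate: property mismatch or tag not firing on this domain"

print(citation_data_status(days_since_install=20, ai_platform_sessions=8, citations_seen=0))
```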

Tip: Screenshot your first-week baseline right after you enable the feature. Week-one citation rate, query volume, and top cited pages become the benchmark for every comparison that follows. Drop them into a shared "AI visibility" doc so stakeholders can see the starting line.

The Clarity AI Visibility Suite: How the Pieces Connect

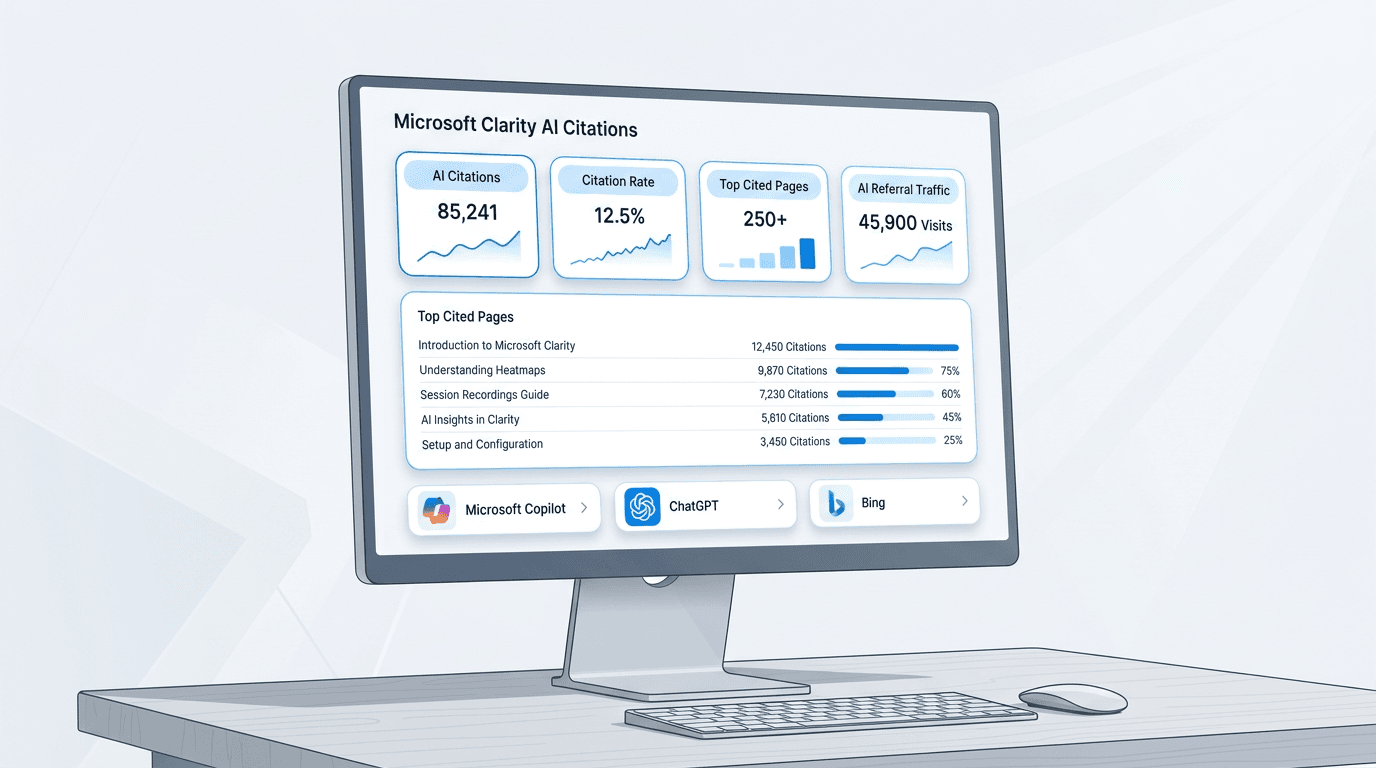

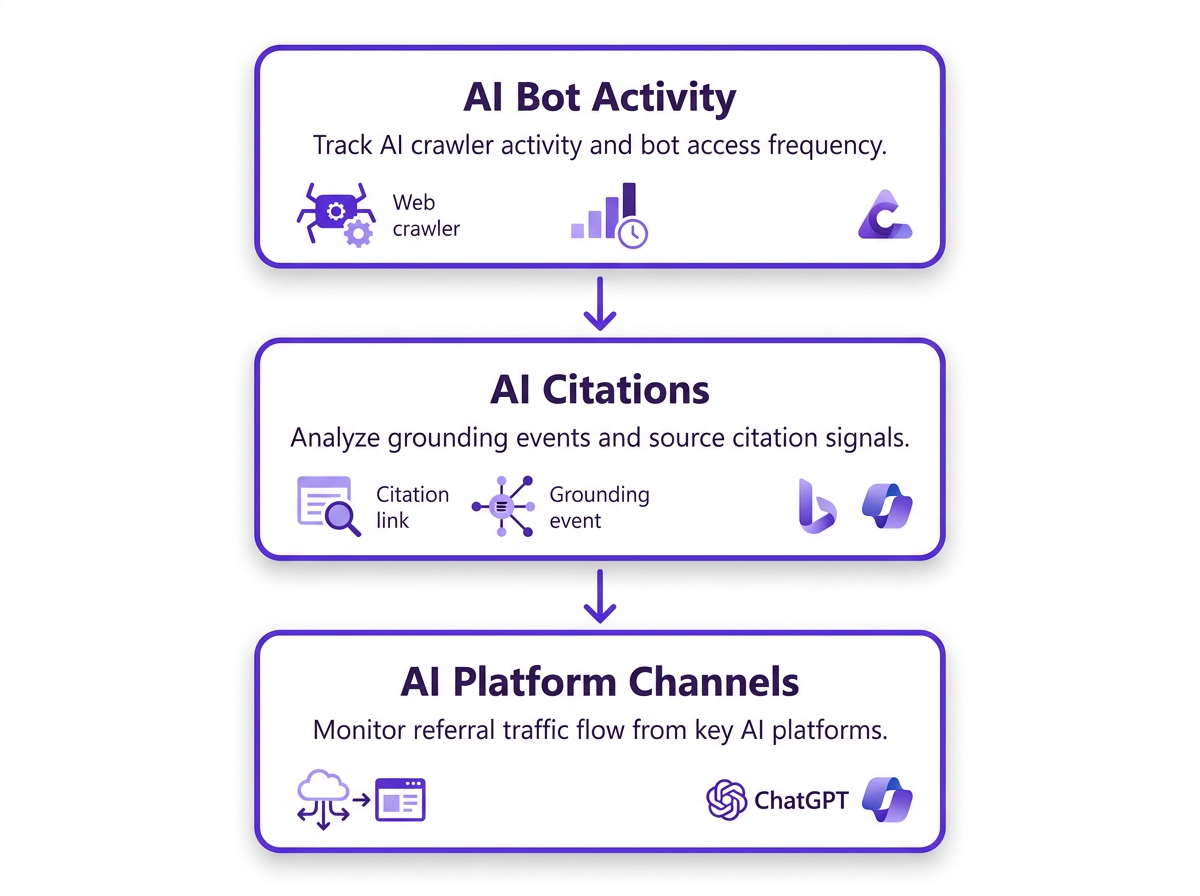

AI Citations sits inside a broader Clarity AI visibility suite. It pulls together three signal types: AI Bot Activity (which crawlers show up and how often), AI Citations (grounding events inside AI answers), and AI Platform Channels (the traffic that actually comes back from AI surfaces). Swipe Insight’s 2026 launch coverage describes this as an end-to-end view that combines Bing Webmaster Tools and Clarity so you can see both citation behavior and the sessions that follow. The bigger shift is learning why visibility metrics matter more than raw traffic counts; otherwise, the suite turns into another dashboard you glance at and ignore.

Dashboard Tour: The 6 Metric Views You'll Use Every Week

Clarity’s GEO analytics dashboard breaks AI citation data into six views, each built for a different diagnostic job. A practical weekly loop looks like this: spot changes, isolate the pages involved, check where citations are showing up, then ship fixes. Here’s how to read each view without getting lost in the noise.

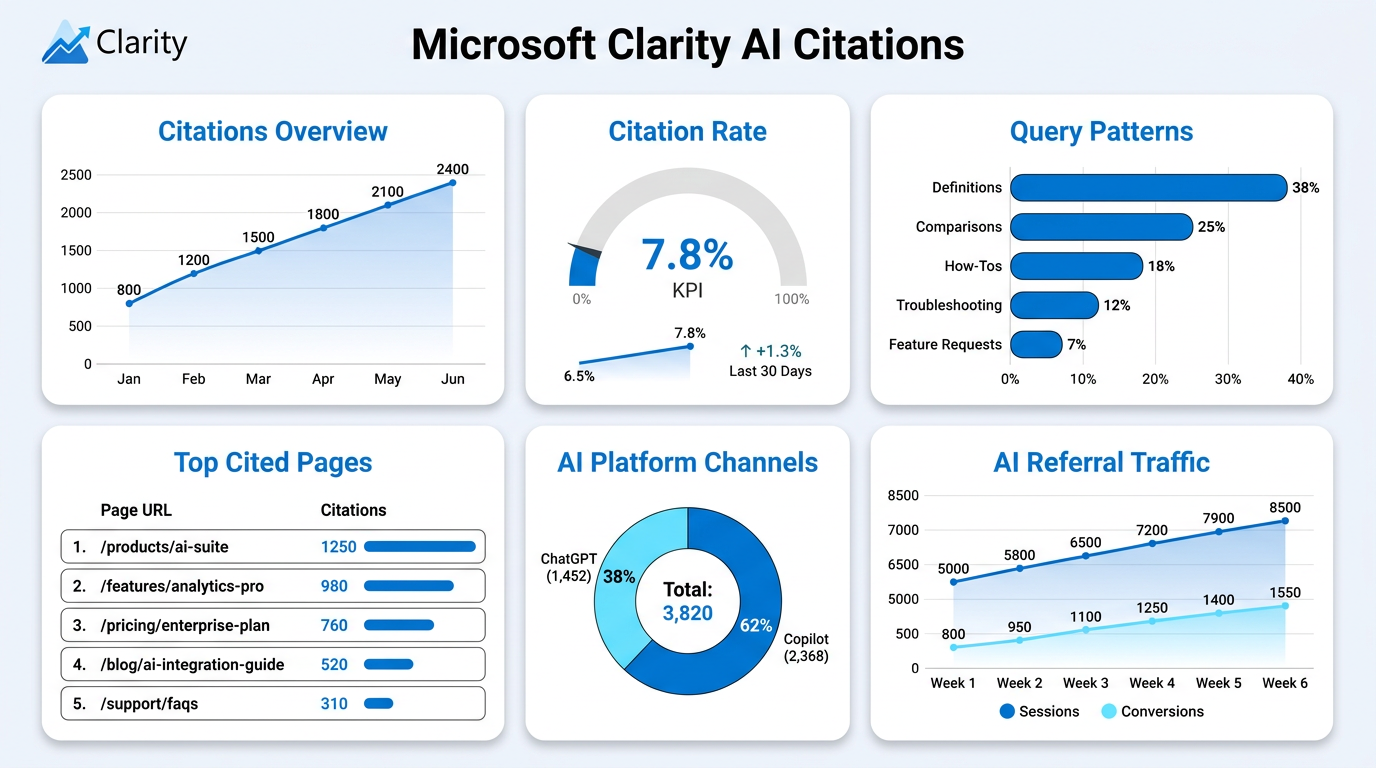

Views 1 and 2: Citations Overview and Citation Rate Tracking

Citations Overview charts total citation events over time. Separate sustained movement from one-off spikes, which often line up with PR moments, launches, or a trending query your content happens to answer. Citation rate tracking is the metric you can actually operate on: the share of relevant queries where your domain gets cited. If citation rate climbs, your content is becoming more "groundable." If query volume rises but citation rate stays flat, you’re usually looking at a coverage gap or a page-structure problem.
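Citation rate and the spike-versus-sustained distinction both come down to simple arithmetic. A minimal Python sketch, assuming you've copied weekly citation counts and query counts from these two views by hand; the function names, the 4-week lookback, and the 2x spike factor are hypothetical choices, not Clarity settings:

```python
from statistics import median

def citation_rate(cited_queries: int, relevant_queries: int) -> float:
    """Share of relevant queries where your domain was cited (0.0 when there is no volume)."""
    if relevant_queries <= 0:
        return 0.0
    return cited_queries / relevant_queries

def flag_spikes(weekly_citations: list[int], factor: float = 2.0, lookback: int = 4) -> list[int]:
    """Return indexes of weeks whose citation count exceeds `factor` times the
    median of the preceding `lookback` weeks (a hypothetical spike rule)."""
    spikes = []
    for i in range(lookback, len(weekly_citations)):
        baseline = median(weekly_citations[i - lookback:i])
        if baseline > 0 and weekly_citations[i] > factor * baseline:
            spikes.append(i)
    return spikes

weekly = [40, 42, 38, 45, 120, 44, 47]
print(citation_rate(30, 400))  # 0.075
print(flag_spikes(weekly))     # [4]
```

Week 4 is flagged because it is nearly three times the median of the four weeks before it, and the following weeks drop back to the earlier level: the shape of a one-off spike rather than sustained growth.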

Views 3 and 4: Top Cited Pages and Query/Topic Patterns

The page breakdown is where winners and gaps become obvious. High-citation pages tend to share a few traits: they answer early, define terms clearly, compare options with structure, and back claims with specific stats that are easy to quote. Query and topic patterns show the kinds of questions your site is getting pulled into. Definitions, "best X" comparisons, troubleshooting, and how-tos usually ground better than opinion posts or thin category pages. Use this view to decide what formats to scale across the site, and what to stop expecting citations from.
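If you keep a list of the queries surfaced in the topic view, a rough keyword classifier can tally which of these formats you're being pulled into. A sketch under clear assumptions: the patterns below are hypothetical heuristics you'd tune to your own queries, not how Clarity groups topics:

```python
import re
from collections import Counter

# Hypothetical keyword heuristics for the formats named above.
# Order matters: the first matching pattern wins.
FORMAT_PATTERNS = {
    "definition": re.compile(r"^(what is|what are|define|meaning of)\b", re.IGNORECASE),
    "comparison": re.compile(r"\b(best|vs|versus|compare|alternatives?)\b", re.IGNORECASE),
    "troubleshooting": re.compile(r"\b(fix|error|not working|troubleshoot)\b", re.IGNORECASE),
    "how-to": re.compile(r"^how (to|do|can)\b", re.IGNORECASE),
}

def classify_query(query: str) -> str:
    """Return the first format label whose pattern matches, else 'other'."""
    text = query.strip()
    for label, pattern in FORMAT_PATTERNS.items():
        if pattern.search(text):
            return label
    return "other"

def format_mix(queries: list[str]) -> Counter:
    """Count how many queries fall into each format bucket."""
    return Counter(classify_query(q) for q in queries)

sample = [
    "What is grounding in Copilot",
    "best session replay tools",
    "clarity heatmap not working",
    "how to install clarity on shopify",
    "our company history",
]
print(format_mix(sample))
```

A large "other" share is a prompt to read those queries manually before deciding which formats to scale.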

Views 5 and 6: AI Platform Channels and AI Referral Traffic

AI Platform Channels shows where citations appear across Copilot surfaces and ChatGPT-via-Bing. Read it as distribution, not intent: it tells you where you’re showing up, not why the question was asked. AI referral traffic connects citations to outcomes: sessions, engagement, and conversions tied to AI-referred visits. After you ship content updates, watch this view alongside citation rate to confirm improvements translate into visits. Use a 28-day window, and annotate meaningful edits so you can tie cause to effect later.
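Annotating edits is easier when every change carries its own before and after windows. A minimal sketch using Python's `datetime`; the edit-log shape and function names are hypothetical, and it assumes the "after" window starts on the day the edit shipped:

```python
from datetime import date, timedelta

WINDOW_DAYS = 28  # the 28-day window recommended above

def comparison_windows(edit_date: date, days: int = WINDOW_DAYS):
    """Return (before, after) windows as inclusive (start, end) date pairs.
    The 'after' window starts on the day the edit shipped."""
    before = (edit_date - timedelta(days=days), edit_date - timedelta(days=1))
    after = (edit_date, edit_date + timedelta(days=days - 1))
    return before, after

def window_complete(edit_date: date, today: date, days: int = WINDOW_DAYS) -> bool:
    """True once the full 'after' window has elapsed."""
    _, (_, after_end) = comparison_windows(edit_date, days)
    return today > after_end

# Hypothetical edit log used for annotations.
edit_log = [{"page": "/pricing", "shipped": date(2026, 3, 2), "change": "added answer-first summary"}]
for entry in edit_log:
    before, after = comparison_windows(entry["shipped"])
    print(entry["page"], before, after)
```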

Metric Definition Table: Save This as Your Internal Reference

| Metric | What It Means | What Good Looks Like | What to Do If It's Low |
|---|---|---|---|
| Queries Cited | Count of query instances where your domain is cited in an AI answer | Early: any non-zero number. Mature: steady week-over-week growth | Expand topic coverage; publish answer-first content for high-volume queries |
| Query Volume | Total query volume for topics relevant to your domain | A proxy for niche size; benchmark within your category, not against the internet | Extend into adjacent topics; strengthen entity associations in Bing Webmaster Tools |
| Citation Rate | Share of relevant queries where you’re cited, the core grounding efficiency metric | Early: 5–15%. Mature: 20%+ on core topics | Rework pages with answer-first sections, clear definitions, and structured comparisons |
| Top Cited Pages | Pages most frequently used as grounding sources in AI answers | A healthy mix (definitions, how-tos, comparisons) instead of one outlier | Compare low performers to top pages and fix structural gaps |
| AI Platform Channels | Where citations appear across Copilot surfaces and ChatGPT-via-Bing | Coverage across multiple surfaces suggests broad grounding eligibility | Verify indexing in Bing Webmaster Tools; confirm pages are crawlable and well-structured |
| AI Referral Traffic | Sessions arriving from AI platform surfaces | Growth plus real engagement (not just drive-by clicks) | Make sure cited pages match intent and include clear CTAs |

Reference this table in stakeholder reviews to frame each metric with context, not just numbers.
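If you track these bands in a script or spreadsheet export, the Citation Rate thresholds from the table can be encoded directly. A minimal sketch; the function name and stage labels are hypothetical, and the bands are this article's rules of thumb rather than Clarity benchmarks:

```python
def assess_citation_rate(rate: float, stage: str) -> str:
    """Compare a citation rate (0-1) with the table's bands:
    early 5-15%, mature 20%+ on core topics."""
    if stage == "early":
        if rate < 0.05:
            return "below early range"
        if rate <= 0.15:
            return "within early range"
        return "above early range"
    if stage == "mature":
        return "at mature target" if rate >= 0.20 else "below mature target"
    raise ValueError(f"unknown stage: {stage!r}")

print(assess_citation_rate(0.075, "early"))  # within early range
print(assess_citation_rate(0.18, "mature"))  # below mature target
```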

Bing Grounding Analytics vs. Search Indexing: Why They're Not the Same

Bing indexing and being "groundable" aren’t interchangeable. Indexing means Bing crawled and stored the page. Grounding eligibility is stricter: the content needs to be structured, authoritative, and unambiguous enough for an LLM to treat it as a reliable source inside an answer. Clarity’s Bing grounding analytics shows which pages are actually being used, usually a much smaller slice than your total indexed footprint. Bing Webmaster Tools tells you whether pages are indexed; Clarity shows the grounding outcome. If you want more detail on how those signals relate, see our guide to AI marketing tools. The operational takeaway is blunt: you can be fully indexed and still sit near zero citations if your pages lack structural clarity, entity consistency, or credible sourcing. Improving LLM citations is content work, not just technical SEO.

What to Do Next: Turn AI Citations Into a Weekly Optimization Loop

Diagnose: Find Citation Gaps and Near-Miss Pages

Bucket pages into four cohorts: high traffic/low citations, high citations/low traffic, rising citations, and falling citations. Start with high traffic/low citations: those pages already have demand, but they’re not getting picked as grounding sources. That’s usually the fastest path to ROI. Look for intent-to-structure mismatches. A page titled "Our Approach to X" rarely grounds as well as "What Is X: Definition, Examples, and How It Works." Copilot citation monitoring rewards pages that get to the point.
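The four cohorts can be assigned with a few comparisons once you have per-page sessions and citation counts for two consecutive windows. A minimal sketch; the `PageStats` fields and the cutoffs are hypothetical, and it puts each page in a single cohort, checking high traffic/low citations first so that priority cohort is never crowded out:

```python
from dataclasses import dataclass

@dataclass
class PageStats:
    url: str
    sessions: int          # sessions in the current window
    citations: int         # citation events in the current window
    prior_citations: int   # citation events in the previous window

def bucket(page: PageStats, traffic_cut: int, citation_cut: int, change_pct: float = 0.25) -> str:
    """Assign a page to one cohort, checked in priority order.
    traffic_cut, citation_cut, and change_pct are hypothetical thresholds."""
    high_traffic = page.sessions >= traffic_cut
    high_citations = page.citations >= citation_cut
    if high_traffic and not high_citations:
        return "high traffic / low citations"
    if high_citations and not high_traffic:
        return "high citations / low traffic"
    if page.prior_citations == 0:
        # Any citations after a zero window count as rising.
        return "rising citations" if page.citations > 0 else "unbucketed"
    change = (page.citations - page.prior_citations) / page.prior_citations
    if change >= change_pct:
        return "rising citations"
    if change <= -change_pct:
        return "falling citations"
    return "unbucketed"

pages = [
    PageStats("/pricing", sessions=5000, citations=2, prior_citations=3),
    PageStats("/glossary/grounding", sessions=200, citations=50, prior_citations=40),
    PageStats("/guide", sessions=1500, citations=30, prior_citations=20),
    PageStats("/blog/old-post", sessions=100, citations=5, prior_citations=10),
]
for p in pages:
    print(p.url, "->", bucket(p, traffic_cut=1000, citation_cut=20))
```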

Fix: Content Changes That Improve Grounding Eligibility

The fixes that tend to move the needle are structural, not cosmetic: add an answer-first section, write explicit definitions, and use structured comparisons with clear criteria. Where you make claims, tighten them with named sources and dates. For entity clarity, keep naming consistent and add "what it is / who it’s for / how it works" blocks near the top of key pages. That’s the practical side of Answer Engine Optimization; for more AEO tactics tied to grounding eligibility, see our AI features explained post.

Measure: Connect Citations to Outcomes

After any meaningful update, track AI referral traffic next to citation rate tracking. If citation rate rises but AI referral traffic stays flat, you’re probably in one of two situations: the AI answer fully satisfies the query (so there’s no reason to click), or the landing-page experience is pushing people away. Log major edits in your analytics stack, then give changes a 28-day window before you label them a win or a miss.
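The two-metric read described above can be sketched as a comparison of the before and after windows. A minimal sketch; the function names and the 10% relative-lift threshold are hypothetical, and the labels only restate the explanations given in this section:

```python
def lifted(before: float, after: float, min_lift: float) -> bool:
    """True if `after` beats `before` by at least `min_lift` (relative change)."""
    if before <= 0:
        return after > 0
    return (after - before) / before >= min_lift

def diagnose(rate_before: float, rate_after: float,
             referrals_before: int, referrals_after: int,
             min_lift: float = 0.10) -> str:
    """Rough read of two 28-day windows; min_lift is a hypothetical threshold."""
    rate_up = lifted(rate_before, rate_after, min_lift)
    traffic_up = lifted(referrals_before, referrals_after, min_lift)
    if rate_up and traffic_up:
        return "rate up, traffic up"
    if rate_up:
        return "rate up, traffic flat: zero-click answer or landing-page problem"
    if traffic_up:
        return "traffic up, rate flat"
    return "no clear change"

print(diagnose(0.08, 0.12, 300, 310))  # rate up, traffic flat: zero-click answer or landing-page problem
```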

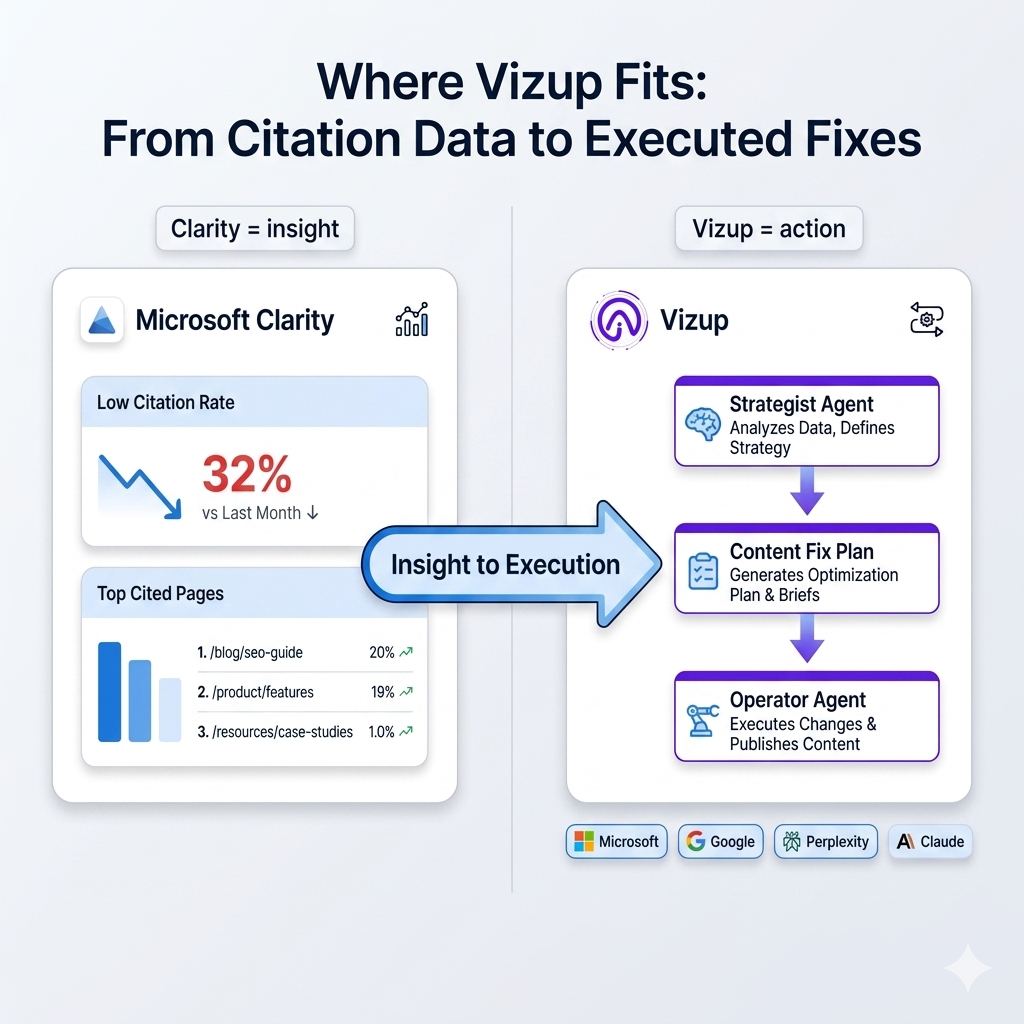

Where Vizup Fits: From Citation Data to Executed Fixes

Clarity tells you what’s happening. Vizup turns that into a plan you can execute. When Clarity highlights a page with a low citation rate, Vizup’s Strategist Agent identifies the content gaps and lays out a fix plan. Then the Operator Agent implements the changes (restructuring sections, adding definitions, tightening entity signals) without a string of manual handoffs between analytics and content.

There’s also a straightforward coverage gap. Clarity tracks Microsoft’s grounding ecosystem: Copilot and ChatGPT-via-Bing. It doesn’t monitor Google AI Overviews, Perplexity, or Claude. Vizup covers all four engines, acting as the cross-engine layer that fills what Clarity can’t see. If you need a complete picture of AI citations across major AI surfaces, Clarity and Vizup work together rather than competing.

Common Pitfalls: Avoid Misreporting AI Search Visibility

Three mistakes that consistently distort how teams report on AI citation performance:

- Treating AI citations as a direct conversion proxy. Citations capture grounding influence, not purchase intent. Back up any performance story with AI referral traffic before you attach revenue assumptions.

- Over-optimizing one page while ignoring topic coverage. One highly cited page doesn’t protect you if competitors own adjacent queries. Citation rate tracking across a topic cluster matters more than a single page’s score.

- Ignoring LLM citations pointing to outdated pages. If an AI system cites stale pricing, deprecated features, or old policy language, users land with the wrong expectations. Review top cited pages monthly for accuracy.
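The second and third pitfalls are easy to check with a little bookkeeping. The sketch below computes a pooled citation rate across every query in a topic cluster (not an average of page scores) and flags top cited pages whose last accuracy review is more than 30 days old, approximating the monthly cadence. It assumes you maintain both records yourself: which of your pages, if any, was cited for each cluster query, and when each page was last reviewed. All names and data are hypothetical:

```python
from collections import Counter
from datetime import date
from typing import Optional

def cluster_report(query_citations: dict[str, Optional[str]]) -> tuple[float, Counter]:
    """Map each cluster query to the URL of your cited page, or None if uncited.
    Returns (cluster citation rate, citations per page)."""
    total = len(query_citations)
    cited_urls = [url for url in query_citations.values() if url is not None]
    rate = len(cited_urls) / total if total else 0.0
    return rate, Counter(cited_urls)

def pages_due_for_review(last_reviewed: dict[str, date], today: date, max_age_days: int = 30) -> list[str]:
    """Return pages whose last accuracy review is older than max_age_days."""
    return sorted(url for url, reviewed in last_reviewed.items()
                  if (today - reviewed).days > max_age_days)

cluster = {
    "what is ai grounding": "/guide/grounding",
    "ai grounding examples": "/guide/grounding",
    "grounding vs indexing": None,
    "how to check copilot citations": None,
    "best ai visibility tools": None,
}
rate, per_page = cluster_report(cluster)
print(rate, per_page)  # 0.4 Counter({'/guide/grounding': 2})

reviews = {"/pricing": date(2026, 2, 15), "/features": date(2026, 3, 20)}
print(pages_due_for_review(reviews, today=date(2026, 4, 1)))  # ['/pricing']
```

In this example one page carries the whole cluster while three adjacent queries go uncited, so the page looks strong even though the cluster sits at 40%.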

Your First 30 Days With Microsoft Clarity AI Citations

The first month is mostly process: enable the feature, capture a baseline across all six views, pick your highest-priority citation-gap pages, ship structural fixes, then check AI referral traffic after 28 days. Microsoft Clarity AI Citations makes AI citation tracking free and broadly accessible at scale, replacing the old choice between expensive tooling and manual prompt simulation. If you need monitoring beyond Microsoft’s ecosystem, Vizup adds cross-engine coverage for Google AI Overviews, Perplexity, and Claude, plus an execution layer that turns citation insights into published improvements.

Frequently Asked Questions

Is Microsoft Clarity AI Citations actually free, and is it solid enough for reporting?

Yes. It’s available at no cost for Clarity users with a verified property. For reporting, it’s stronger than most alternatives because it uses grounded citation signals from real AI interactions rather than simulated prompts, per the Microsoft Clarity Blog (2026). For stakeholder updates, pair citation rate tracking with AI referral traffic so you’re showing both influence and outcomes.

How long until AI citations data shows up after enabling it?

If your site has moderate traffic and established Bing indexing, you’ll often see initial data within 7 to 14 days. Low-volume sites or newly tagged properties may need 3 to 4 weeks. To confirm the implementation, check the AI Platform channel group in Clarity’s acquisition view. If Clarity is recording sessions from AI sources, the tag is working and citation data should appear as query volume accumulates.

What’s the difference between AI citations and AI referral traffic in Clarity?

AI citations are grounding events: cases where an AI system used your content to build an answer, whether or not anyone clicked. AI referral traffic is what it sounds like: sessions that arrive at your site from an AI platform surface. A page can post a high citation rate and still generate low referral traffic if the AI answer resolves the query without a click. You need both metrics: citations show influence, traffic shows outcomes.

Does Microsoft Clarity AI Citations include Google AI Overviews, Perplexity, or Claude?

No. Clarity’s AI Citations covers Microsoft’s grounding ecosystem only: Copilot experiences and ChatGPT-via-Bing. Google AI Overviews, Perplexity, and Claude aren’t included. If you need cross-engine monitoring, Vizup tracks all four major AI platforms and adds an execution layer for acting on citation gaps across each engine.

How should I use citation rate tracking to choose which pages to update first?

Start with the high-traffic/low-citation cohort. Those pages already have demand, but they aren’t being selected as grounding sources, which makes them high-ROI targets. Compare their structure to your top cited pages and look for missing answer-first sections, fuzzy entity naming, or claims without support. Fix structure before you expand with new content; improving established pages with indexing history typically moves citation rate faster than publishing net-new pages.

What to Read Next

For background on how grounding differs from standard indexing, see Bing Webmaster Tools documentation. For the broader strategy view, the AI search visibility 2026 playbook covers cross-engine optimization across major AI platforms.