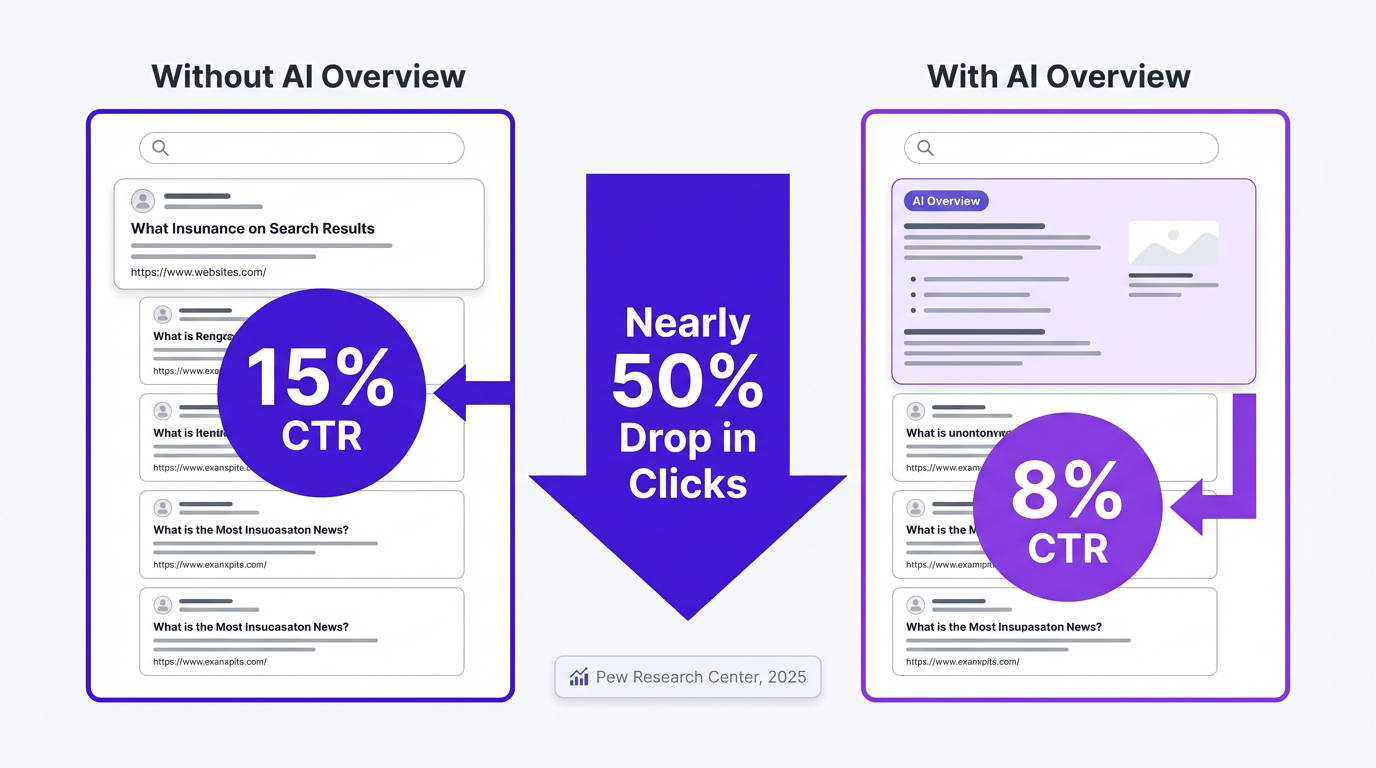

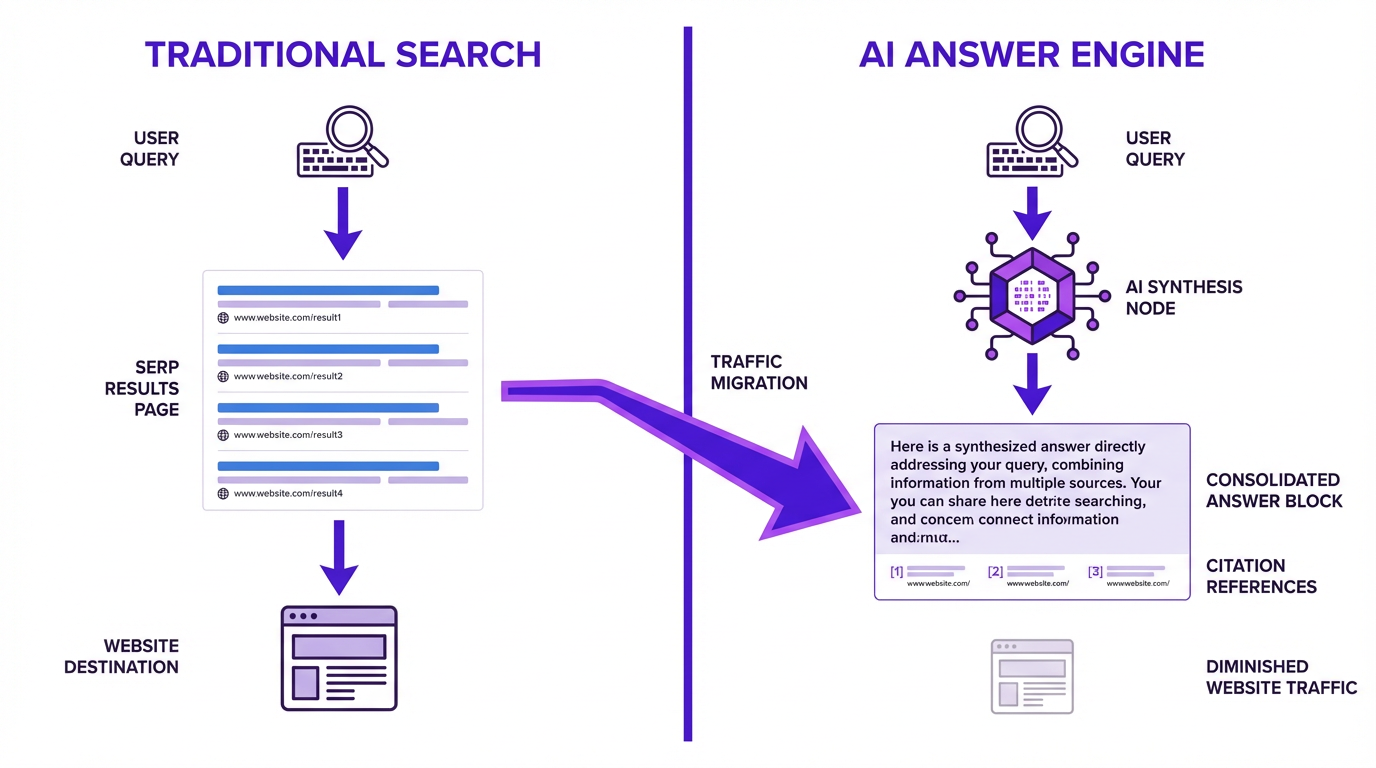

The shift from users scanning a list of links to receiving direct AI answers is a structural change in how people discover information online. A 2025 study from the Pew Research Center found that when an AI Overview appeared, users clicked on a traditional search result only 8% of the time, compared to 15% when no AI summary was present. That is a relative drop of roughly 47% in clicks, and it signals a fundamental redistribution of attention away from organic listings. With AI assistants now answering billions of queries across Google, Bing, ChatGPT, and Perplexity, brands that fail to appear inside these AI-generated responses risk becoming invisible to their audiences. This is exactly why answer engine optimization belongs at the center of every SEO strategy, not on the margins. To protect and grow their reach, marketing teams need to track brand presence in AI answers with the same discipline they apply to traditional SERP rankings, treating AI search visibility optimization as an ongoing, measurable priority.

This playbook is written for SEO leads, content teams, and growth marketers who have noticed organic traffic softening and want a concrete framework for what to do next. We cover the AI search landscape, audit methodology, a five-step optimization framework, common mistakes, and advanced tactics. The goal is a working system, not a conceptual overview. If you want the broader strategic context first, start with why answer engines are the new front page before returning here.

Table of Contents

Jump to the section most relevant to your situation:

- The AI Search Landscape Has Already Shifted Under Your Feet - why the answer layer is eating traditional visibility

- How Each AI Engine Decides What to Cite - retrieval logic for Gemini, Perplexity, ChatGPT, and Claude

- Comparison Table: What Drives Visibility Across AI Search Engines - side-by-side citation factor breakdown

- Auditing Your Current AI Search Visibility - a step-by-step baseline audit framework

- The AI Answer Optimization Framework: A 5-Step Playbook - the core repeatable process

- What Most People Get Wrong About AI Search Visibility Optimization - four expensive mistakes

- Advanced Tactics: Edge Cases and Expert-Level Moves - programmatic content, prompt engineering, llms.txt

- FAQ: AI Search Visibility Optimization - five direct answers to common questions

- Key Takeaways and Your Next 7 Days - recap and action plan

The AI Search Landscape Has Already Shifted Under Your Feet

One early study found that Google's Search Generative Experience could reduce organic traffic by 18% to 64% depending on the site's query mix. That was before AI Overviews became the default experience. The brands hit hardest were not the ones with weak SEO. They were the ones with strong informational content that AI engines now answer directly, without sending the click. Understanding the difference between GEO, AEO, and SEO is the first step to recognizing why your existing playbook may be producing diminishing returns.

Here is the blunt version: most brands are still optimizing exclusively for Google's blue links while the answer layer absorbs their visibility. This is the 2026 equivalent of ignoring mobile in 2015. The brands that recognized the mobile shift early restructured their entire content and UX approach and compounded that advantage for years. The same dynamic is playing out now, just faster. The work that gets brands ranking in organic search is largely the same work that gets them cited by AI, but Answer Engine Optimization (AEO) raises the stakes.

Already familiar with retrieval-augmented generation, grounding, and citation mechanics across AI engines? Skip ahead to the audit section. For everyone else, the next section breaks down exactly how each engine decides what to pull in.

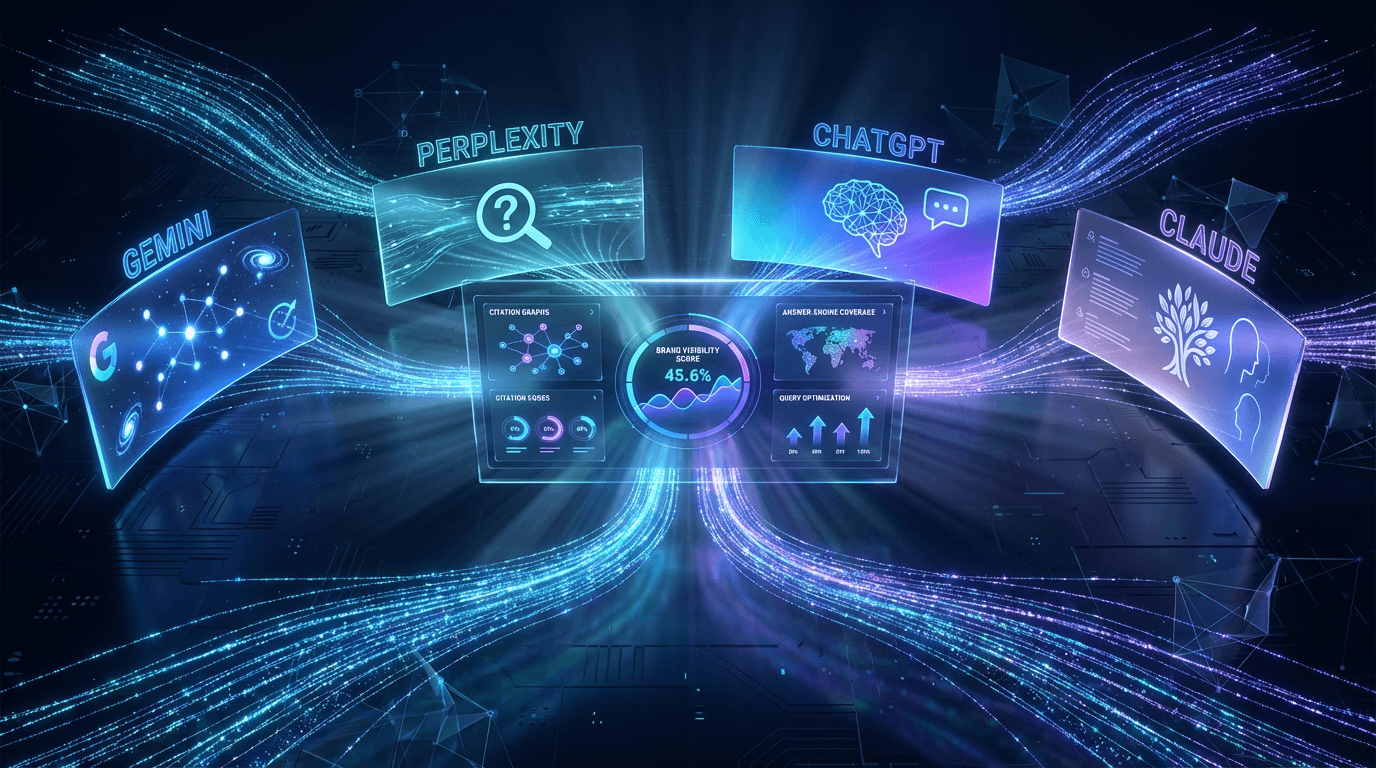

How Each AI Engine Decides What to Cite

AI search visibility optimization is not one strategy. It is four or five, because each engine weights authority, recency, structure, and source diversity differently. Treating them as interchangeable is one of the most common and costly mistakes practitioners make right now.

Gemini Visibility: Google's AI Overviews and the Knowledge Graph Advantage

Gemini leans heavily on Google's existing index and Knowledge Graph. Pages ranking organically in the top 10 have a meaningful shot at citation, but the correlation is weaker than most assume. Structured data closes that gap significantly: FAQ schema, HowTo schema, and Product schema all increase the likelihood of being pulled into AI Overviews because they give Gemini pre-parsed, machine-readable content blocks to work with. This aligns with the long-standing SEO practice of using schema markup to help Google understand page content, an investment that now pays dividends for AI visibility.
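As a rough illustration of the FAQ schema mentioned above, the sketch below builds schema.org `FAQPage` JSON-LD and wraps it in a script tag for embedding in a page. The helper names (`build_faq_jsonld`, `to_script_tag`) and the sample question text are hypothetical, and adding the markup does not guarantee inclusion in any AI Overview.

```python
import json


def build_faq_jsonld(pairs):
    """Return a schema.org FAQPage object for (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Wrap a JSON-LD object in the script tag used to embed it in HTML."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical sample content; replace with the page's real Q&A pairs.
faq = build_faq_jsonld([
    ("What is answer engine optimization?",
     "The practice of structuring content so AI answer engines can cite it."),
    ("Which engine cites the most sources?",
     "Perplexity, often 8 to 15 sources per answer."),
])
print(to_script_tag(faq))
```

The question and answer text in the markup should match what is visible on the page, so the structured block and the rendered content tell the same story.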

Recency matters more here than in other engines. Google re-crawls frequently, and Gemini reflects fresh content faster than any competitor. Publish a well-structured update to an existing page and Gemini can surface it within days.

Perplexity Visibility: The Citation-Heavy Research Engine

Perplexity cites more sources per answer than any other engine, often 8 to 15 per response. That creates an unusual opening: even mid-authority sites can earn visibility if their content is specific, well-structured, and topically precise. Perplexity's index combines real-time web crawling with content partnerships, so freshness matters enormously. A piece published this week can appear in answers within 24 to 48 hours if it is structured correctly.

One B2B SaaS brand restructured their comparison pages by leading each section with a direct answer sentence, adding original benchmark data, and publishing weekly. Within eight weeks, they went from zero Perplexity citations to appearing in roughly 30% of category queries. The content was not dramatically longer. It was more extractable and more specific.

ChatGPT Visibility and Claude Visibility: The Conversational Engines

ChatGPT with browsing enabled and Claude with web access behave more like research assistants than search engines. They synthesize across multiple sources and sometimes skip citations unless the user asks, though newer versions increasingly include them. Brand mentions in training data matter more here than anywhere else: if your brand is discussed frequently across forums, publications, and documentation, these models carry that knowledge into their responses even without a live web lookup. You can use Vizup's AI Crawler Checker to verify your content is accessible to these models.
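A quick local check of crawler accessibility can be sketched with Python's standard `urllib.robotparser`. This is not how any particular checker tool works; it only tests robots.txt rules. The user-agent tokens listed are assumptions to verify against each vendor's current documentation, and the robots.txt content is a made-up sample.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; confirm the current names in each vendor's docs.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def crawler_access(robots_txt, url, agents=AI_CRAWLERS):
    """Return {agent: allowed} for a URL, given robots.txt content as text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks one AI crawler site-wide.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

report = crawler_access(SAMPLE_ROBOTS, "https://example.com/blog/aeo-guide")
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A robots.txt pass only shows that the crawler is permitted to fetch the page. It says nothing about whether the page was actually crawled or used.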

Answer Engine Optimization (AEO) is the practice of structuring content to be understood, referenced, and recommended by LLMs like ChatGPT in response to user questions. Leading platforms like Vizup now offer AEO tools to help marketers improve visibility as AI referral traffic grows. The practical implication is straightforward: publish content that answers questions in a self-contained, quotable way. Tracking which content pieces are used in AI responses is crucial, and engines favor passages they can extract cleanly and synthesize without ambiguity.

Comparison Table: What Drives Visibility Across AI Search Engines

| Factor | Gemini | Perplexity | ChatGPT (Browse) | Claude (Web) |
|---|---|---|---|---|
| Source Authority Weight | Very High | Medium | High | High |
| Recency Sensitivity | High | Very High | Medium | Medium |
| Structured Data Impact | Very High | Low | Low | Low |
| Avg. Citations Per Answer | 3-5 | 8-15 | 2-4 | 2-5 |
| Preferred Content Format | How-to, FAQ, authoritative guides | Comparison, listicle, data-rich pages | Self-contained explanatory passages | Detailed, well-sourced explanations |
| Crawl / Index Method | Google index + Knowledge Graph | Real-time crawl + partnerships | Bing index + live browse | Live web access on request |
| Best Content Type to Target | Structured how-to with schema | Specific comparison and original data pages | Quotable, self-contained answer blocks | Thorough, well-cited explanations |

Factors based on publicly documented behavior and practitioner reverse-engineering as of Q1 2026. No single optimization covers all four engines.

The takeaway from this table: there is no universal optimization. A page perfectly tuned for Gemini (structured data, top-10 organic rank, FAQ schema) may still be invisible in Perplexity if it lacks original data and topical specificity. That is exactly why continuous monitoring across engines is not optional. It is the only way to know where you actually stand.

Auditing Your Current AI Search Visibility (Before You Optimize Anything)

Start with your 20 to 30 highest-value queries: the informational and comparative queries where your brand should be authoritative. Run each one through Gemini, Perplexity, ChatGPT, and Claude. Record three outcomes for each: cited (your URL appears), mentioned (your brand name appears without a link), or absent (no trace of your brand). Categorize results by engine and query type. One pass gives you a clear map of where you have visibility and where you have none.
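The three audit outcomes can be recorded consistently with a small classifier. The sketch below assumes you have already captured each engine's answer text and cited URLs by hand; the brand name `Acme`, the domain `acme.example`, and the sample rows are all hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def classify_result(answer_text, cited_urls, brand, domain):
    """Return 'cited', 'mentioned', or 'absent' for one engine response."""
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        # Match the bare domain and any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return "cited"
    if brand.lower() in answer_text.lower():
        return "mentioned"
    return "absent"


# Hypothetical audit rows: (engine, query, answer text, cited URLs).
rows = [
    ("Perplexity", "best ai visibility tools",
     "Several tools lead the category.", ["https://www.acme.example/tools"]),
    ("ChatGPT", "best ai visibility tools",
     "Popular options include Acme.", []),
    ("Gemini", "what is aeo",
     "AEO is the practice of structuring content.", ["https://other.example/aeo"]),
]

audit = {
    (engine, query): classify_result(text, urls, "Acme", "acme.example")
    for engine, query, text, urls in rows
}
tally = Counter(audit.values())
print(audit)
print(tally)
```

Plain substring matching on the brand name can produce false positives for short or common names, so spot-check anything marked "mentioned".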

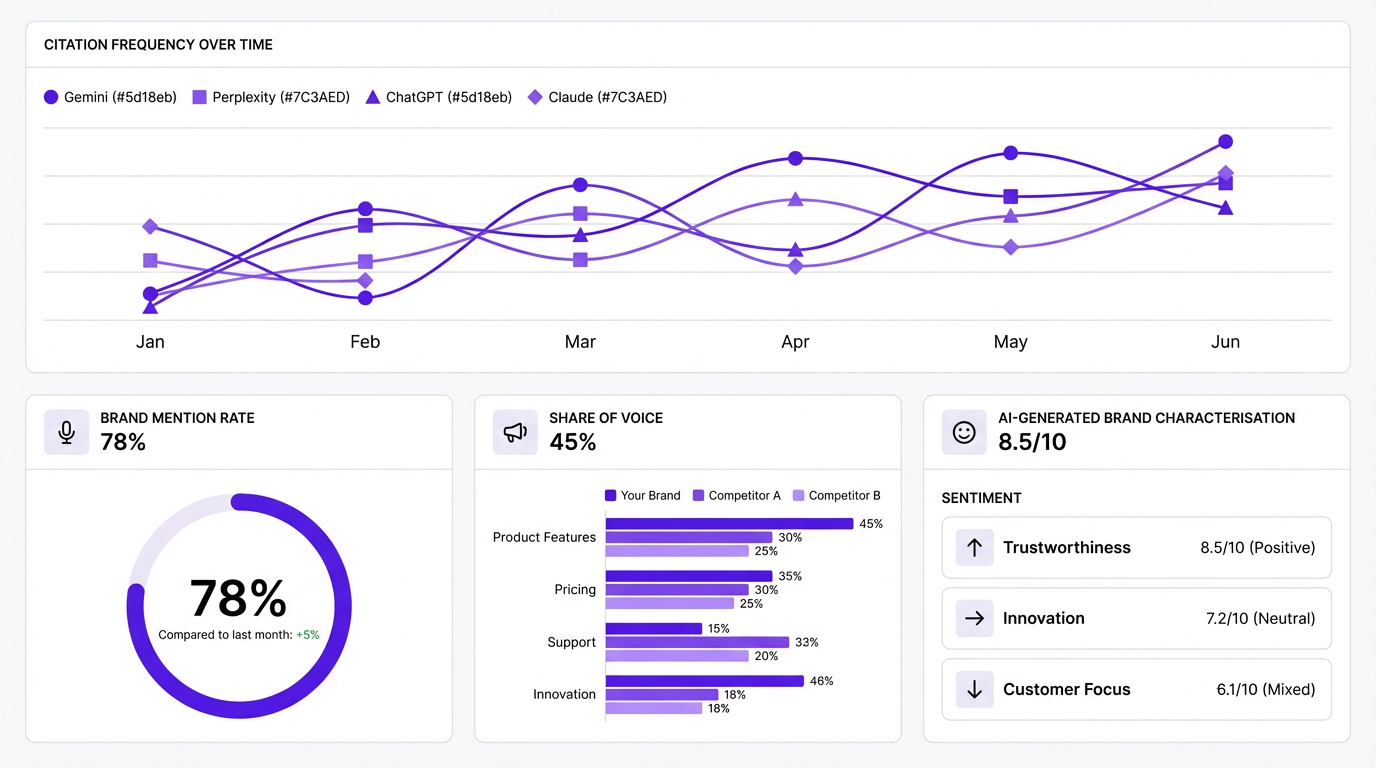

Manual auditing works for a first baseline but does not scale. Running 30 queries across four engines every week means 120 manual checks, and that is before you factor in competitor tracking. Vizup's Answer Engine Monitoring automates exactly this workflow, tracking citation frequency, brand mention rate, and share of voice across engines continuously. You can learn more about how to optimize for AI-powered answer engines and what the monitoring layer looks like in practice. AEO requires specific techniques beyond standard SEO, and traditional rankings do not guarantee inclusion in AI answers.

The AI Answer Optimization Framework: A 5-Step Playbook

Treat this as a repeatable process, not a one-time project. Each step builds on the previous one. Skipping steps is how brands end up with content that looks optimized but produces no measurable AI visibility improvement.

Step 1: Map Your Query Universe by AI Intent Type

Not all queries trigger AI answers equally. Informational and comparative queries generate AI responses most reliably. Transactional queries, for now, still lean toward traditional results. Sort your query list against the framework below before writing a single word of new content:

| Query Type | Example | Most Active AI Engine | Optimization Priority |
|---|---|---|---|
| Discovery | What is answer engine optimization? | ChatGPT, Claude | High |
| Evaluation / Comparison | Best AI search visibility tools 2026 | Perplexity, Gemini | Very High |
| How-to | How to optimize content for Gemini AI Overviews | Gemini, Perplexity | Very High |
| Brand | Is Vizup good for AI monitoring? | All engines | High |

Map your existing content library against these categories before deciding where to invest optimization effort.
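Sorting a query list against the four intent types can be roughed out with keyword rules. This is only a first-pass heuristic under assumed trigger words, not a description of how any engine classifies queries; the priorities are copied from the table, and `brand_terms` is a parameter you would set to your own brand names.

```python
# Priorities as listed in the query-type table.
PRIORITY = {
    "Discovery": "High",
    "Evaluation / Comparison": "Very High",
    "How-to": "Very High",
    "Brand": "High",
}

# Assumed trigger words for comparison-style queries.
COMPARISON_WORDS = {"best", "vs", "versus", "top", "compare", "alternatives"}


def classify_query(query, brand_terms=("vizup",)):
    """Assign one of the four intent types using simple keyword rules."""
    q = query.lower()
    if any(term in q for term in brand_terms):
        return "Brand"
    if q.startswith(("how to", "how do")):
        return "How-to"
    if COMPARISON_WORDS & set(q.split()):
        return "Evaluation / Comparison"
    return "Discovery"


queries = [
    "What is answer engine optimization?",
    "Best AI search visibility tools 2026",
    "How to optimize content for Gemini AI Overviews",
    "Is Vizup good for AI monitoring?",
]
for q in queries:
    intent = classify_query(q)
    print(f"{intent:<24} {PRIORITY[intent]:<10} {q}")
```

Brand terms are checked first, so a query like "how to use Vizup" lands in Brand rather than How-to. Reorder the rules if your reporting needs the opposite.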

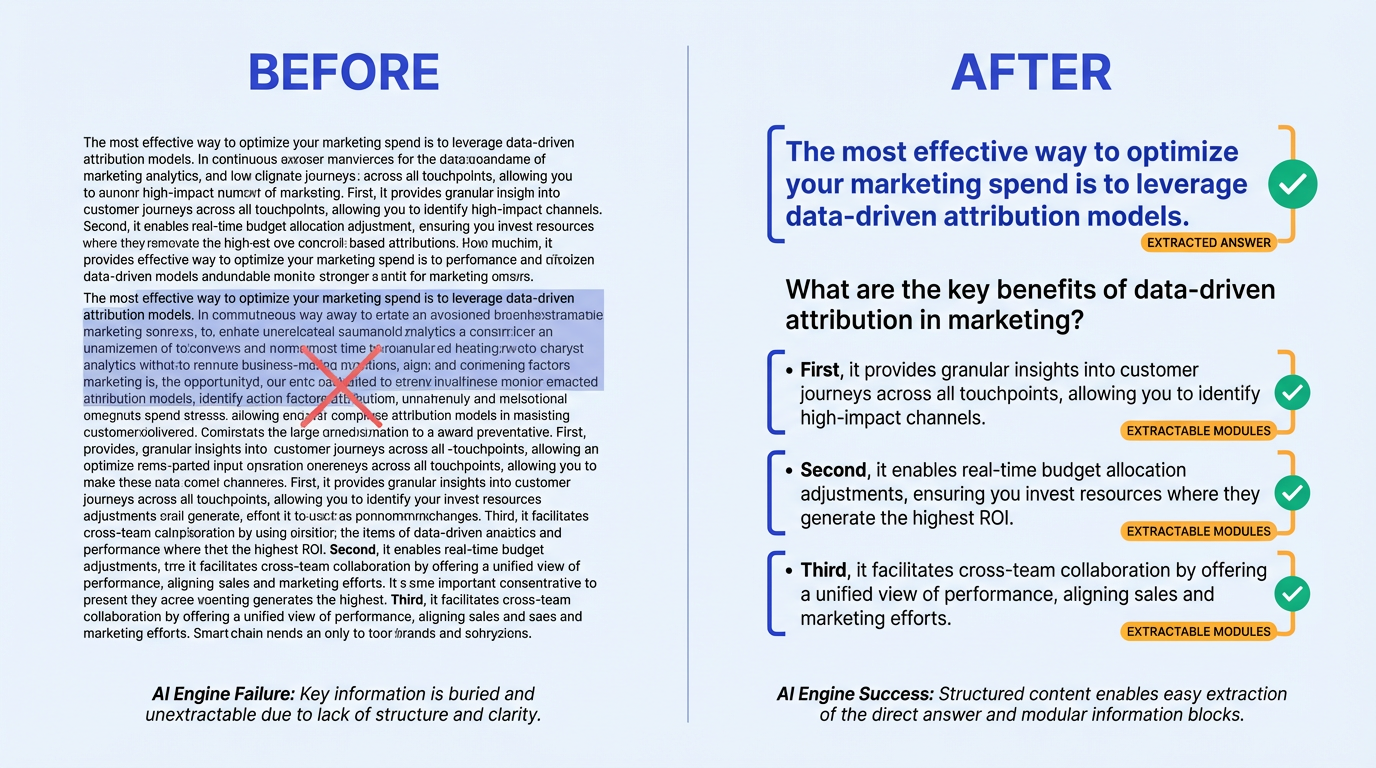

Step 2: Restructure Content for Extractability

AI engines do not read your page the way a human does. They extract passages. Your content needs to be built in modular, self-contained blocks that can stand alone when pulled out of context. Consider the difference between these two openings:

Before: 'There are many factors that go into how search engines determine which content to surface, and understanding these factors requires looking at the history of how search has evolved over the past two decades, including the shift from keyword matching to semantic understanding.'

After: 'AI search engines prioritize content that is specific, well-structured, and self-contained. Lead each section with a direct answer, keep paragraphs under four sentences, and use subheadings that mirror natural language queries.'

The second version is extractable. The first is not.

Formatting tactics that improve extractability:

- Lead every section with a direct answer sentence, not a context-setting preamble

- Use definition-style openings for concept explanations (e.g., 'AI search visibility optimization is the practice of...')

- Keep paragraphs under four sentences

- Write subheadings that mirror natural language queries rather than creative labels

- Never bury the key fact in the middle of a long paragraph
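Two of the formatting tactics above (paragraph length and query-style subheadings) are easy to check mechanically before publishing. The sketch below is a rough lint under assumed rules: the question-word list and the naive sentence splitter are illustrative choices, and abbreviations such as "e.g." will be miscounted.

```python
import re

# Assumed list of words that make a heading read like a search query.
QUESTION_WORDS = (
    "what", "how", "why", "which", "when", "who",
    "can", "is", "does", "should",
)


def count_sentences(paragraph):
    """Naive count: split on whitespace that follows ., ! or ?."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return len([p for p in parts if p])


def lint_section(heading, paragraphs, max_sentences=3):
    """Return a list of extractability issues for one section."""
    issues = []
    words = heading.strip().split()
    if not words or words[0].lower() not in QUESTION_WORDS:
        issues.append("heading does not read like a natural-language query")
    for i, paragraph in enumerate(paragraphs, start=1):
        n = count_sentences(paragraph)
        if n > max_sentences:  # "under four sentences" means at most three
            issues.append(f"paragraph {i} has {n} sentences (limit {max_sentences})")
    return issues


print(lint_section("Our Philosophy", ["One. Two. Three. Four."]))
print(lint_section(
    "How does Perplexity choose sources?",
    ["Perplexity cites many sources. Freshness matters."],
))
```

A check like this catches structural problems only. Whether the first sentence is actually a direct answer still needs an editor's eye.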

Step 3: Build Entity Authority, Not Just Page Authority

AI engines increasingly think in entities, not keywords. Your brand, your authors, and your products need to exist as recognized entities across the web, not just on your own domain. A high domain authority score means little if Gemini's Knowledge Graph does not have a coherent entity record for your brand. This aligns with the idea that AEO forces you to think about how your content is reassembled into an answer, which is different from simply ranking in a list. The tactical checklist here is specific:

- Claim and optimize your Google Knowledge Panel with accurate, consistent information

- Ensure consistent entity descriptions across Wikipedia, Wikidata, Crunchbase, and LinkedIn

- Get mentioned (not just linked) in authoritative third-party publications

- Publish original research that gets cited by other sources, creating an entity-level citation trail

- Align author bylines with recognized expert profiles that carry their own entity presence
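The consistency item in the checklist above can be spot-checked by comparing each profile's description against one canonical description. The sketch below uses the standard library's `difflib.SequenceMatcher`; the 0.8 threshold, the brand `Acme`, and the profile texts are assumptions for illustration, and the descriptions are assumed to have been collected by hand.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def find_inconsistent_profiles(canonical, profiles, threshold=0.8):
    """Return {source: similarity} for descriptions below the threshold."""
    flagged = {}
    for source, description in profiles.items():
        score = SequenceMatcher(
            None, normalize(canonical), normalize(description)
        ).ratio()
        if score < threshold:
            flagged[source] = round(score, 2)
    return flagged


# Hypothetical canonical description and per-profile copies.
canonical = "Acme is an AI search monitoring platform for marketing teams."
profiles = {
    "Wikidata": "Acme is an AI search  monitoring platform for marketing teams.",
    "Crunchbase": "Provider of accounting software for dentists.",
}

print(find_inconsistent_profiles(canonical, profiles))
```

Character-level similarity is a blunt instrument. Treat a flag as a prompt to read both descriptions, not as proof that they conflict.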

Step 4: Earn Citations Through Original Data and Unique Perspectives

This is the hardest step and the one most competitors skip. AI engines preferentially cite sources that contain information unavailable elsewhere: original surveys, proprietary benchmarks, unique frameworks, first-person case studies. If your content is a rewrite of the top five Google results, no AI engine has a reason to cite you. You are training data, not a source.

Visitors arriving from AI search queries already convert at significantly higher rates than traditional organic search visitors. The audience you earn through original, citable content is disproportionately valuable. Invest accordingly.

Step 5: Monitor, Measure, and Iterate with AI Search Visibility Tools

Traditional SEO metrics (rankings, impressions, CTR) do not capture AI search visibility. You need a separate measurement layer tracking citation frequency per engine, brand mention rate in AI answers, share of voice across your query universe, and sentiment of AI-generated brand mentions. To benchmark your LLM visibility against competitors, you need tooling that runs queries at scale and records structured outputs. Vizup provides a comprehensive suite for exactly this purpose, giving marketers visibility into where their business appears in answer engines.

| Feature | Vizup |
|---|---|
| Multi-Engine Monitoring | Yes (Gemini, Perplexity, ChatGPT, Claude) |
| Citation Tracking | Yes |
| Real-Time Alerts | Yes |
| Competitor Benchmarking | Yes |
| Reporting / Export | Full export + dashboard |
| Primary Use Case | AI answer monitoring + AEO |
| Pricing Tier | See Vizup pricing |

Tool capabilities based on publicly available product pages as of Q1 2026. For a broader comparison, see the best AI visibility tools roundup.
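To make the Step 5 metrics concrete, the sketch below computes citation rate, mention rate, and share of voice per engine from recorded answers. The formulas are assumptions, since the terms are not formally defined here: share of voice is taken as your brand's citations divided by all tracked-brand citations on that engine. The record layout and the brands `Acme` and `Rival` are hypothetical, and sentiment is left out.

```python
from collections import defaultdict


def visibility_metrics(records, brand):
    """Aggregate per-engine metrics from one record per engine answer.

    Each record needs 'engine', 'cited' (brands whose URL was cited) and
    'mentioned' (brands named without a link).
    """
    totals = defaultdict(
        lambda: {"answers": 0, "cited": 0, "mentioned": 0, "all_citations": 0}
    )
    for record in records:
        t = totals[record["engine"]]
        t["answers"] += 1
        t["cited"] += brand in record["cited"]
        t["mentioned"] += brand in record["mentioned"]
        t["all_citations"] += len(record["cited"])

    report = {}
    for engine, t in totals.items():
        report[engine] = {
            "citation_rate": t["cited"] / t["answers"],
            "mention_rate": t["mentioned"] / t["answers"],
            "share_of_voice": (
                t["cited"] / t["all_citations"] if t["all_citations"] else 0.0
            ),
        }
    return report


# Hypothetical recorded answers.
records = [
    {"engine": "Perplexity", "cited": ["Acme", "Rival"], "mentioned": []},
    {"engine": "Perplexity", "cited": ["Rival"], "mentioned": ["Acme"]},
    {"engine": "Gemini", "cited": [], "mentioned": []},
]

report = visibility_metrics(records, "Acme")
for engine, metrics in report.items():
    print(engine, metrics)
```

Whatever definitions you settle on, keep them fixed between reporting periods so week-over-week changes reflect visibility rather than a changed formula.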

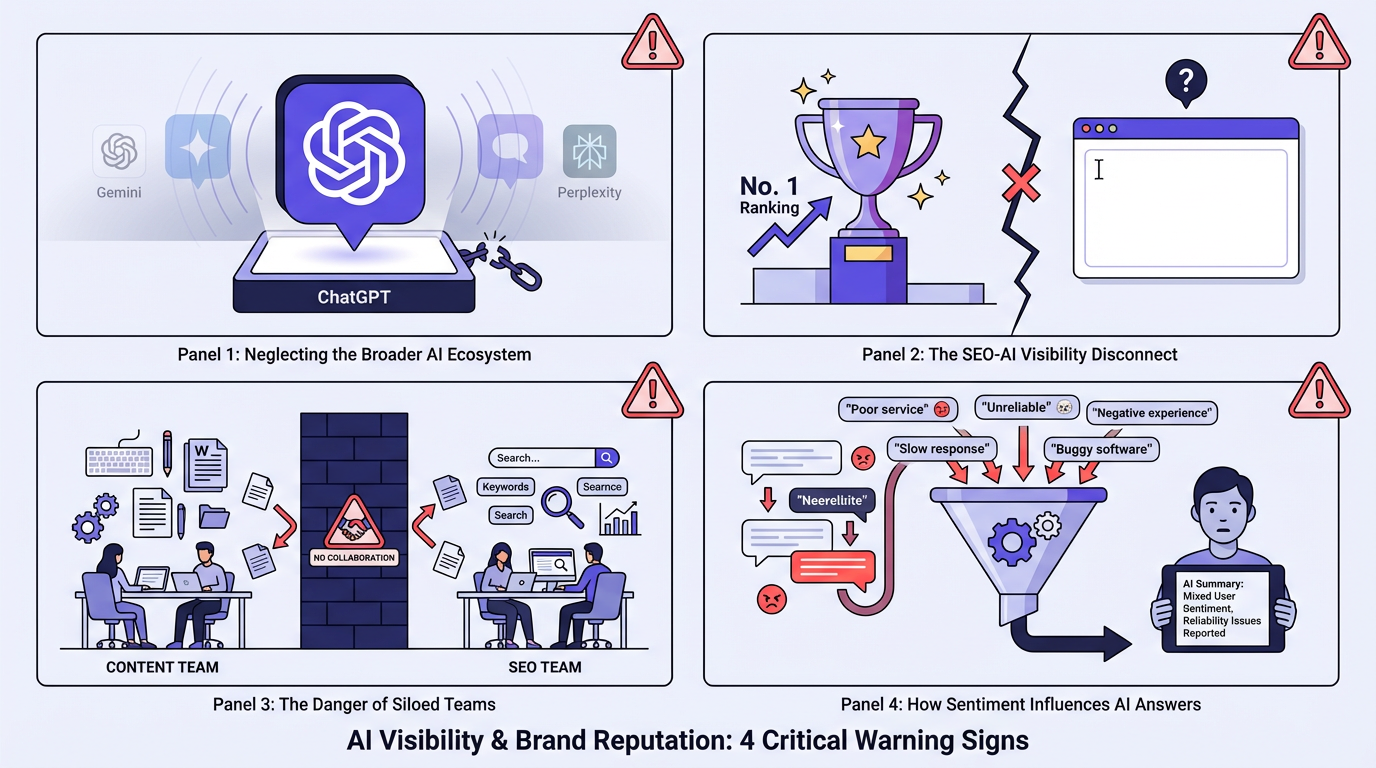

What Most People Get Wrong About AI Search Visibility Optimization

The fastest-moving brands are not running AI answer optimization as a separate channel. They have folded it into the existing content workflow: every new piece of content gets evaluated for extractability and entity clarity before it publishes, not retrofitted six months later. As Jason Bland argued in Forbes (2026), you should not treat answer engine optimization as a separate discipline from SEO, because most of what people call AEO is just competent SEO with a new label. Teams that treat it as a parallel program consistently fall behind.

ChatGPT gets the press coverage, but Gemini drives far more search volume because it is embedded in Google Search, which still handles the vast majority of daily queries. Deprioritizing Gemini means ignoring the largest AI-influenced search surface by a significant margin.

Ranking number one on Google does not guarantee citation in AI answers. The overlap is real but weaker than people expect. Practitioners consistently observe roughly 40-60% overlap between top organic results and AI citations, not the 95% many assume. AI engines pull from a wider source pool and weight factors like content specificity and entity authority that traditional ranking algorithms treat differently.
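You can measure this overlap for your own query set rather than rely on a rule of thumb. The sketch below assumes one possible definition, the share of AI-cited URLs that also appear in the organic top results, and uses made-up URLs. The normalization (dropping scheme, `www.`, and trailing slashes) is an illustrative choice.

```python
from urllib.parse import urlparse


def normalize_url(url):
    """Reduce a URL to host + path so trivially different forms compare equal."""
    parts = urlparse(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    return host + parts.path.rstrip("/")


def citation_overlap(organic_urls, ai_cited_urls):
    """Share of AI-cited URLs that also appear in the organic result list."""
    organic = {normalize_url(u) for u in organic_urls}
    cited = {normalize_url(u) for u in ai_cited_urls}
    if not cited:
        return 0.0
    return len(organic & cited) / len(cited)


# Hypothetical results for a single query.
organic_top = [
    "https://www.a.example/guide/",
    "https://b.example/compare",
    "https://c.example/faq",
    "https://d.example/blog/post",
]
ai_cited = [
    "https://a.example/guide",
    "https://b.example/compare/",
    "https://e.example/benchmarks",
    "https://f.example/forum/thread",
    "https://g.example/docs",
]

print(citation_overlap(organic_top, ai_cited))  # 2 of 5 cited URLs also rank
```

Averaging this figure per engine across your query universe shows where organic rank does and does not translate into citations.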

Finally: AI engines do not just cite you. They characterize you. If the dominant third-party content about your brand skews negative, that characterization gets synthesized into AI answers about your category. Monitoring sentiment in AI-generated brand mentions is not a vanity exercise. It is a direct signal of whether your entity authority is working for you or against you.

Advanced Tactics: Edge Cases and Expert-Level Moves

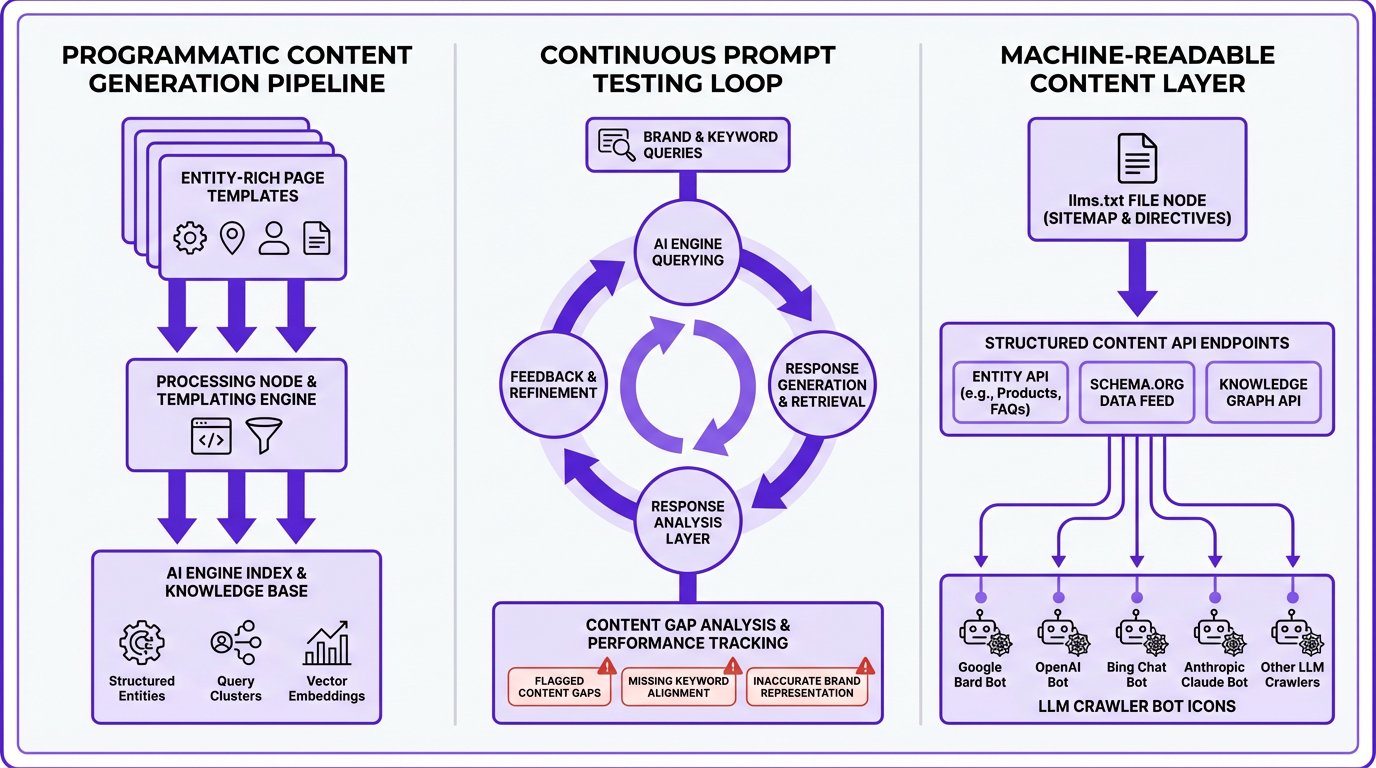

Programmatic content at scale remains one of the most underused tactics for AI visibility. The goal is to create hundreds of entity-rich, query-specific pages that AI engines can draw from across your topic cluster. The risk is thin-content penalties from Google. The solution is a template architecture that enforces minimum original content requirements per page: a unique data point, a unique example, a unique entity context. Pages meeting this threshold are both thin-content-safe and AI-indexable.
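The minimum-original-content rule can be enforced as a gate in the publishing pipeline. The sketch below is one assumed reading of that rule: every page in a batch must carry a non-empty data point, example, and entity context that no other page in the batch repeats. The `PageDraft` type and the sample drafts are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

REQUIRED_FIELDS = ("data_point", "example", "entity_context")


@dataclass
class PageDraft:
    slug: str
    data_point: str
    example: str
    entity_context: str


def split_publishable(pages):
    """Return (ok_slugs, rejected_slugs) based on per-field uniqueness."""
    counts = {
        field: Counter(getattr(p, field).strip().lower() for p in pages)
        for field in REQUIRED_FIELDS
    }
    ok, rejected = [], []
    for page in pages:
        values = {f: getattr(page, f).strip().lower() for f in REQUIRED_FIELDS}
        # Every field must be filled in and appear exactly once in the batch.
        unique = all(v and counts[f][v] == 1 for f, v in values.items())
        (ok if unique else rejected).append(page.slug)
    return ok, rejected


drafts = [
    PageDraft("crm-for-dentists", "41% adoption", "Clinic A rollout", "dental practices"),
    PageDraft("crm-for-vets", "28% adoption", "Clinic A rollout", "veterinary clinics"),
    PageDraft("crm-for-gyms", "35% adoption", "Studio B rollout", "fitness studios"),
]

ok, rejected = split_publishable(drafts)
print("publish:", ok)
print("rework:", rejected)
```

Both pages sharing a duplicated value are sent back, since the check cannot tell which one is the original.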

Running your target queries through each AI engine weekly and recording the exact language each engine uses to describe your brand, your category, and your competitors is a practice more forward-thinking teams are now formalizing. When the characterization drifts negative or inaccurate, trace it back to the third-party content being cited and address it at the source. This is reputation management for the AI layer, a discipline that barely existed 18 months ago. For teams building a full system, building an AI-powered SEO strategy from scratch covers the broader infrastructure decisions.

Worth watching closely: the emerging role of AI-specific sitemaps and machine-readable content layers. Some brands are already implementing llms.txt files (analogous to robots.txt but designed to guide LLM crawlers) and structured content APIs that serve clean, machine-readable versions of their content on request. This is early-adopter territory, but the brands investing in it now will have a meaningful head start when AI crawlers standardize their behavior.
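For teams experimenting with llms.txt, the sketch below renders a file in the layout used by the public llms.txt proposal as commonly described: an H1 title, a blockquote summary, and H2 sections of annotated links. The format is not a ratified standard and crawler support varies, so treat the structure as an assumption. The site name, URLs, and notes are placeholders.

```python
def render_llms_txt(site_name, summary, sections):
    """Render llms.txt text from {heading: [(title, url, note), ...]}."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content; swap in your own pages.
llms_txt = render_llms_txt(
    "Acme Docs",
    "Guides and reference material on AI search visibility.",
    {
        "Guides": [
            ("AEO playbook", "https://example.com/aeo-playbook.md",
             "Five-step optimization framework"),
        ],
        "Reference": [
            ("Glossary", "https://example.com/glossary.md",
             "Definitions of AEO, GEO, and SEO"),
        ],
    },
)
print(llms_txt)
```

The rendered text would be served at the site root as `/llms.txt`, alongside (not instead of) robots.txt.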

FAQ: AI Search Visibility Optimization

Is AI search visibility optimization different from traditional SEO?

Yes, meaningfully so. Traditional SEO optimizes for ranking signals that influence where a page appears in a SERP. AI search visibility optimization focuses on whether your content gets extracted, cited, or synthesized by AI answer engines. The technical inputs overlap: AEO builds on the same principles of authority and structure as competent SEO. The outputs and measurement methods are different, however, and they call for specific techniques beyond standard SEO.

Which AI search engine should I prioritize for visibility in 2026?

Start with Gemini if you are resource-constrained. It is embedded in Google Search, which handles the highest query volume by far. Perplexity is the second priority because it cites the most sources per answer, giving mid-authority sites a realistic path to visibility. ChatGPT and Claude matter most for brand awareness and training data presence, which compounds over time but is harder to measure directly.

How do I check if my brand is being cited in AI-generated answers?

Manually, you can run your 20 to 30 highest-value queries through each AI engine and record citation status. At scale, you need an automated monitoring tool. Vizup's Answer Engine Monitoring tracks citation frequency, mention rate, and share of voice across Gemini, Perplexity, ChatGPT, and Claude continuously. For a broader view of available tools, there are several roundups covering the current category.

Can small businesses compete for AI answer visibility against large brands?

Yes, more effectively than in traditional SEO. Perplexity in particular cites 8 to 15 sources per answer, which means topical specificity and content freshness matter more than domain authority. A small brand with highly specific, well-structured comparison pages and original data can earn Perplexity citations in competitive categories. The advantage large brands have in traditional SEO (link authority, brand recognition) is partially offset by AI engines' preference for specificity over scale.

How long does it take to see results from AI answer optimization efforts?

Perplexity is the fastest engine to respond: well-structured, freshly published content can appear in answers within 24 to 48 hours. Gemini typically reflects content changes within days to a couple of weeks, depending on crawl frequency. ChatGPT and Claude are slower because their training data cycles are less frequent, though browsing-enabled versions can surface new content faster. Most teams see measurable citation improvement within four to eight weeks of systematic optimization.

Key Takeaways and Your Next 7 Days

The 5-step framework in one sentence each:

- Step 1: Map your query universe by intent type so you know which queries actually trigger AI answers.

- Step 2: Restructure your content for extractability by leading with direct answers and keeping paragraphs modular.

- Step 3: Build entity authority across Wikipedia, Wikidata, Crunchbase, and third-party publications so AI engines recognize your brand as a known entity.

- Step 4: Earn citations through original data, proprietary benchmarks, and unique frameworks that AI engines cannot find elsewhere.

- Step 5: Monitor, measure, and iterate using AI search visibility tools that track citation frequency and brand mention rate across all four engines.

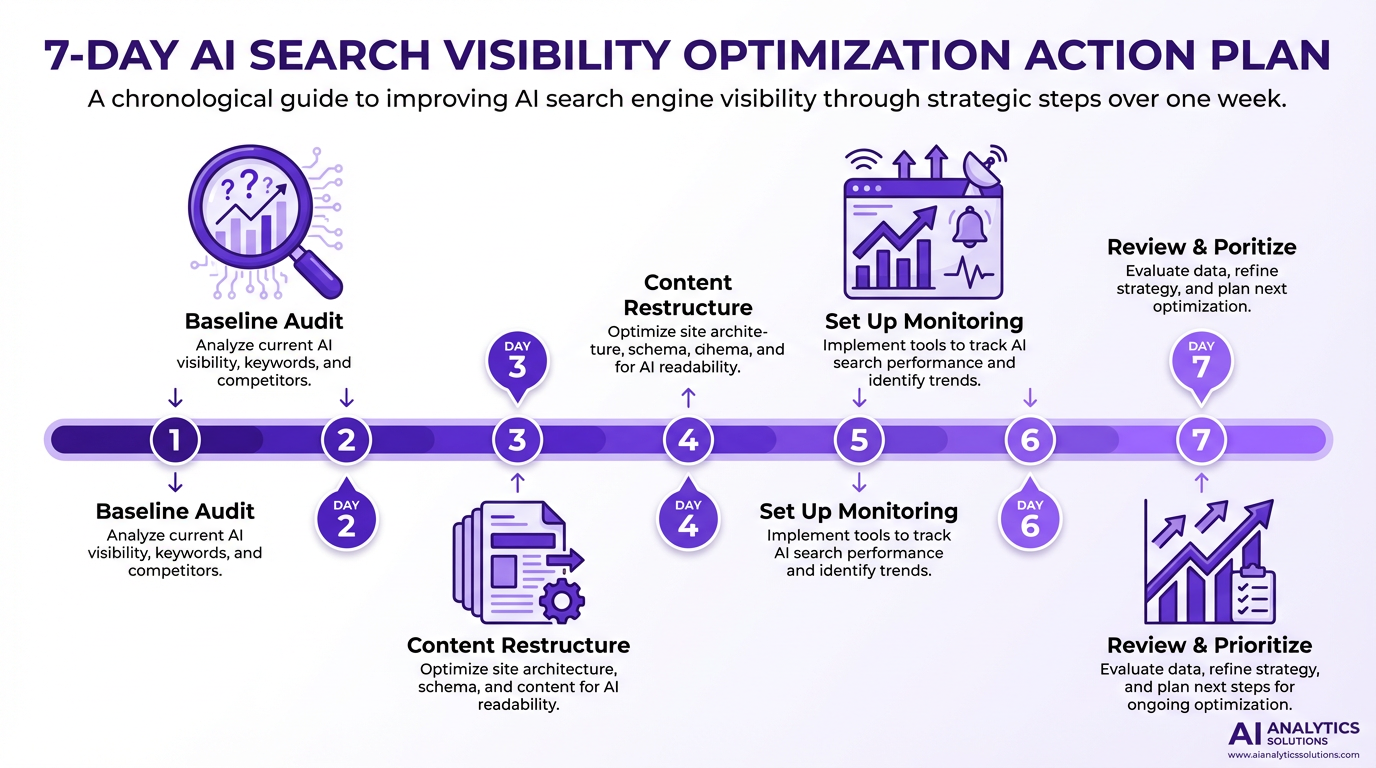

Your 7-day action plan: Days 1 and 2, run your baseline audit across Gemini, Perplexity, ChatGPT, and Claude for your 20 to 30 highest-value queries. Record citation status in a simple spreadsheet. Days 3 and 4, take your top five pages by traffic or business value and restructure them for extractability using the formatting tactics from Step 2. Days 5 and 6, set up continuous monitoring with an AI search visibility tool so you stop auditing manually. Day 7, review your initial monitoring data, identify the three queries with the biggest gap between your current citation rate and where you should be, and prioritize those for the next content sprint.

AI search is not replacing traditional search. It is layering on top of it. As of Q1 2026, Google still handles the vast majority of daily queries while AI assistants collectively handle billions. Both layers matter, and both are growing. The brands that win will be monitoring and optimizing both simultaneously. The infrastructure to do that exists today. The only real question is whether your team builds it now or spends the next year catching up.