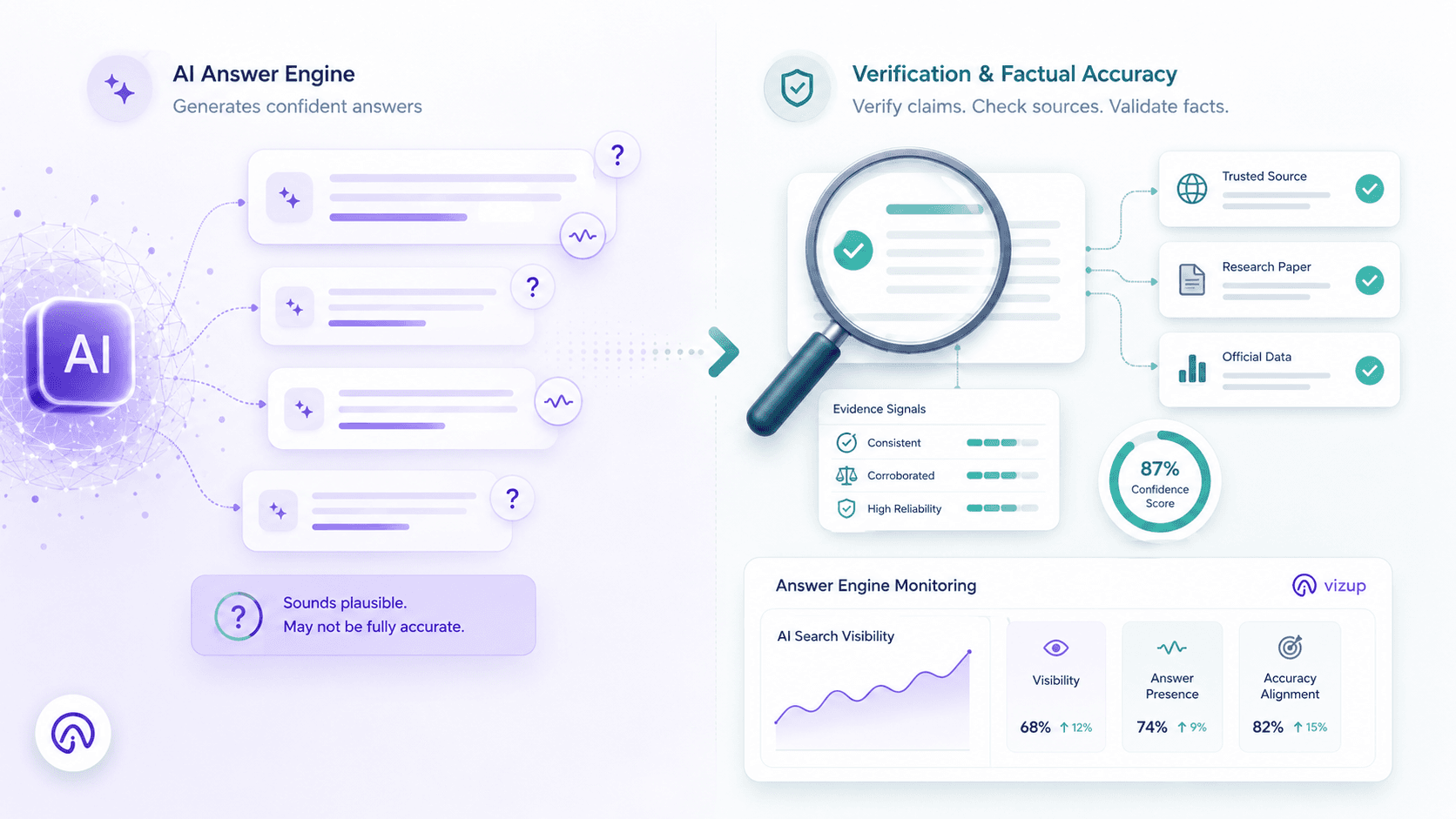

Google ALDRIFT (Algorithm Driven Iterated Fitting of Targets) is a research framework meant to make AI-generated answers more factually reliable by training models to notice, then correct, their own plausible-but-wrong statements. Instead of rewarding output that merely reads well, ALDRIFT targets the "plausibility problem": the tendency of AI to state misinformation with confidence.

You have seen the failure mode: an AI answer that scans as correct, sounds natural, and projects authority, yet falls apart on inspection. That gap is what Google is trying to close with ALDRIFT, and it matters for marketers, SEO strategists, and content leads. As Google’s AI gets sharper at flagging factual drift, the floor for content quality and verifiability rises with it. The framework, detailed in a Search Engine Journal analysis by Roger Montti (May 2026), points to a future where “sounds right” stops being a pass.

The 'Plausibility Problem': Why AI Answers Need a Reality Check

Large language models generate what’s statistically likely next. They’re trained to predict word sequences from patterns in huge datasets, which makes them great at fluent, confident prose. It does not make them reliable fact-checkers. That’s the Google AI plausibility problem in practice: answers that read authoritative while smuggling in fabricated citations, wrong dates, or just-off claims.

The fallout isn’t theoretical. When AI Overviews or chatbots get details wrong about a brand, a medical condition, or a financial product, trust drops for both the AI surface and the sources it cites. An April 2026 accuracy analysis of AI Overviews conducted by AI startup Oumi for The New York Times reported measurable error rates in factual claims shown through generative search results. That level of scrutiny helps explain the ALDRIFT direction: build systems that catch their own errors before users have to.

Info: The plausibility problem isn’t a quirk of one model. It follows from how current LLMs produce text, which is why frameworks like ALDRIFT look like an evolution of the stack, not a quick fix.

How Does the Google ALDRIFT Framework Actually Work?

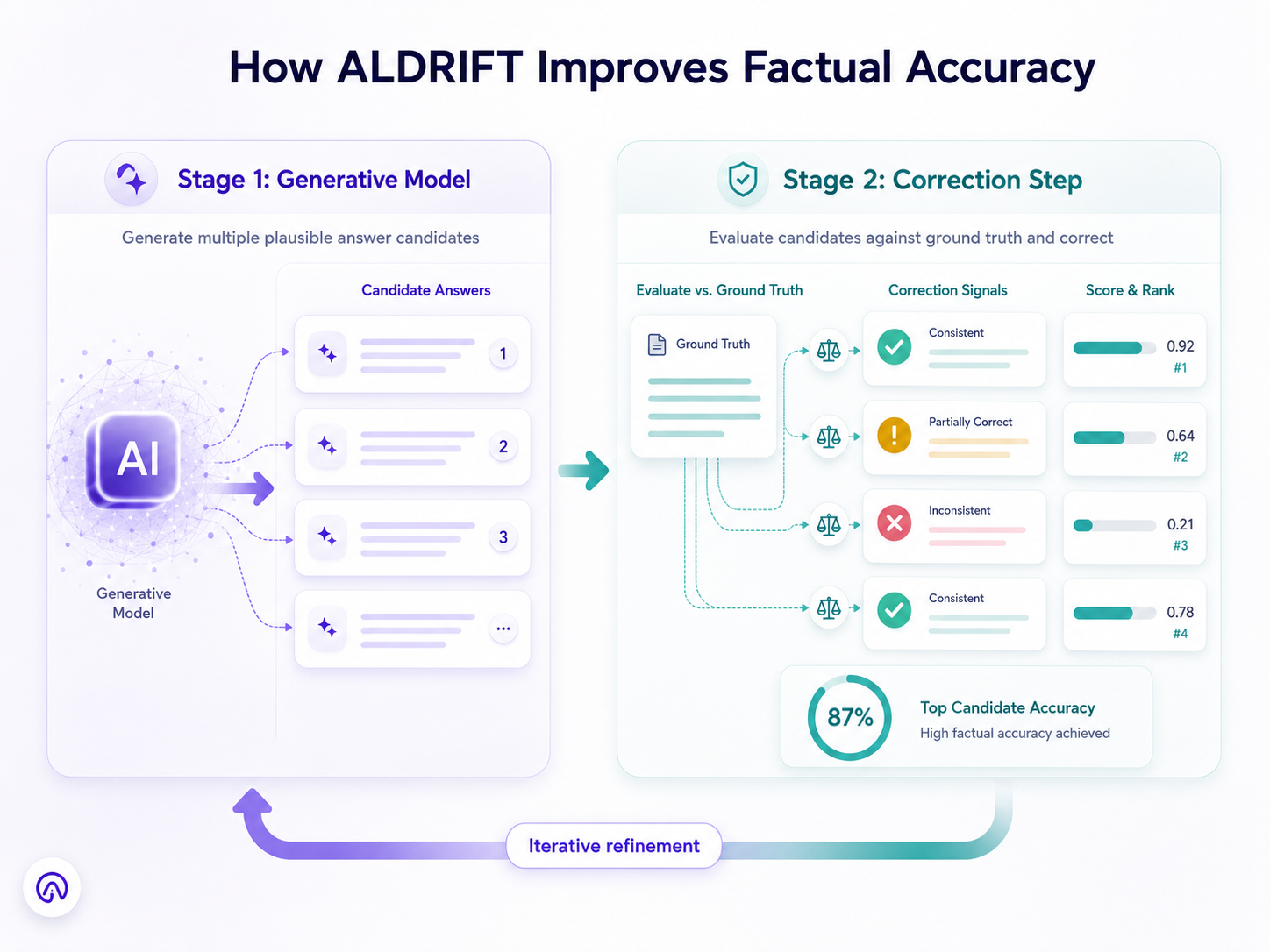

ALDRIFT isn’t a single model; it’s a two-stage training setup for adaptive generative models. A useful mental picture is a writer working alongside a relentless, domain-savvy editor. The writer drafts answers optimized for fluency and coverage. The editor scores those drafts against known truths, and the writer updates based on the corrections. After enough cycles, the writer starts anticipating the editor, producing answers that self-correct earlier in the process.

Stage 1: Generating Plausible (But Potentially Flawed) Answers

ALDRIFT starts by having a generative model produce multiple candidate answers to a prompt. These candidates are tuned to sound correct and natural, basically the same strength current LLMs already have. This is the productive, occasionally unreliable phase: the model samples across the plausible answer space based on its training. The research term you’ll see here is “coarse learnability.” It means the model doesn’t have to hit the ideal target immediately, but it does need to cover enough of the right territory that strong candidates aren’t thrown out early (Search Engine Journal, 2026).

Stage 2: The 'Drift' Correction and Self-Critique

Stage two brings in an external scoring step that checks each candidate against “ground truth,” or a set of known facts. The model is trained to spot where an answer drifts (subtly or dramatically) from what’s correct, and to move away from the plausible-but-wrong versions toward the accurate ones. The loop matters: repeated refinement pushes the generative model toward lower-cost (more accurate) answers while the correction step limits error buildup over iterations. The arXiv paper “Sample-Efficient Optimization over Generative Priors via Coarse Learnability” lays out the math behind the idea, arguing that this correction loop helps prevent a model from compounding its own biases across successive rounds.
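
The generate, score, and refit cycle described above can be illustrated with a toy loop. This is a minimal sketch, not the algorithm from the paper. It assumes a tiny fixed set of candidate answers, a hypothetical `cost` function standing in for the external scoring step, and an update in the spirit of the cross-entropy method, where the model moves only partway toward the best-scoring samples each round. The names `refine`, `keep`, and `mix` are illustrative assumptions.

```python
import random
from collections import Counter


def refine(candidates, cost, rounds=5, samples=200, keep=0.2, mix=0.5, seed=0):
    """Toy generate -> score -> refit loop over a fixed set of candidate answers."""
    rng = random.Random(seed)
    # Stand-in for the generative prior: a uniform distribution over candidates.
    weights = {c: 1.0 / len(candidates) for c in candidates}
    for _ in range(rounds):
        # Generate: sample candidate answers from the current distribution.
        drawn = rng.choices(list(weights), weights=list(weights.values()), k=samples)
        # Score: order samples by external cost (lower means closer to ground truth).
        drawn.sort(key=cost)
        elite = drawn[: max(1, int(keep * samples))]
        counts = Counter(elite)
        # Refit: blend the old distribution with the elite distribution.
        # The partial step (mix < 1) keeps one noisy round from dominating.
        for c in weights:
            target = counts[c] / len(elite)
            weights[c] = (1 - mix) * weights[c] + mix * target
    return weights


# Hypothetical example: three plausible-looking answers, one of which matches
# an assumed ground truth of "1969".
answers = ["1968", "1969", "1970"]
weights = refine(answers, cost=lambda a: abs(int(a) - 1969))
best = max(weights, key=weights.get)
print(best, round(weights[best], 3))
```

In this toy setup, probability mass moves toward the lowest-cost answer over successive rounds. A real system would replace the lookup table with a trained generative model and the lambda with a far more expensive evaluation step.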

The Google Research paper says the ALDRIFT framework “opens exciting avenues for future research” and points “toward a principled foundation for adaptive generative models” (Search Engine Journal, 2026). If you’re trying to keep up with how AI is changing SEO, this is the shift to watch: generation optimized for accuracy, not just fluency.

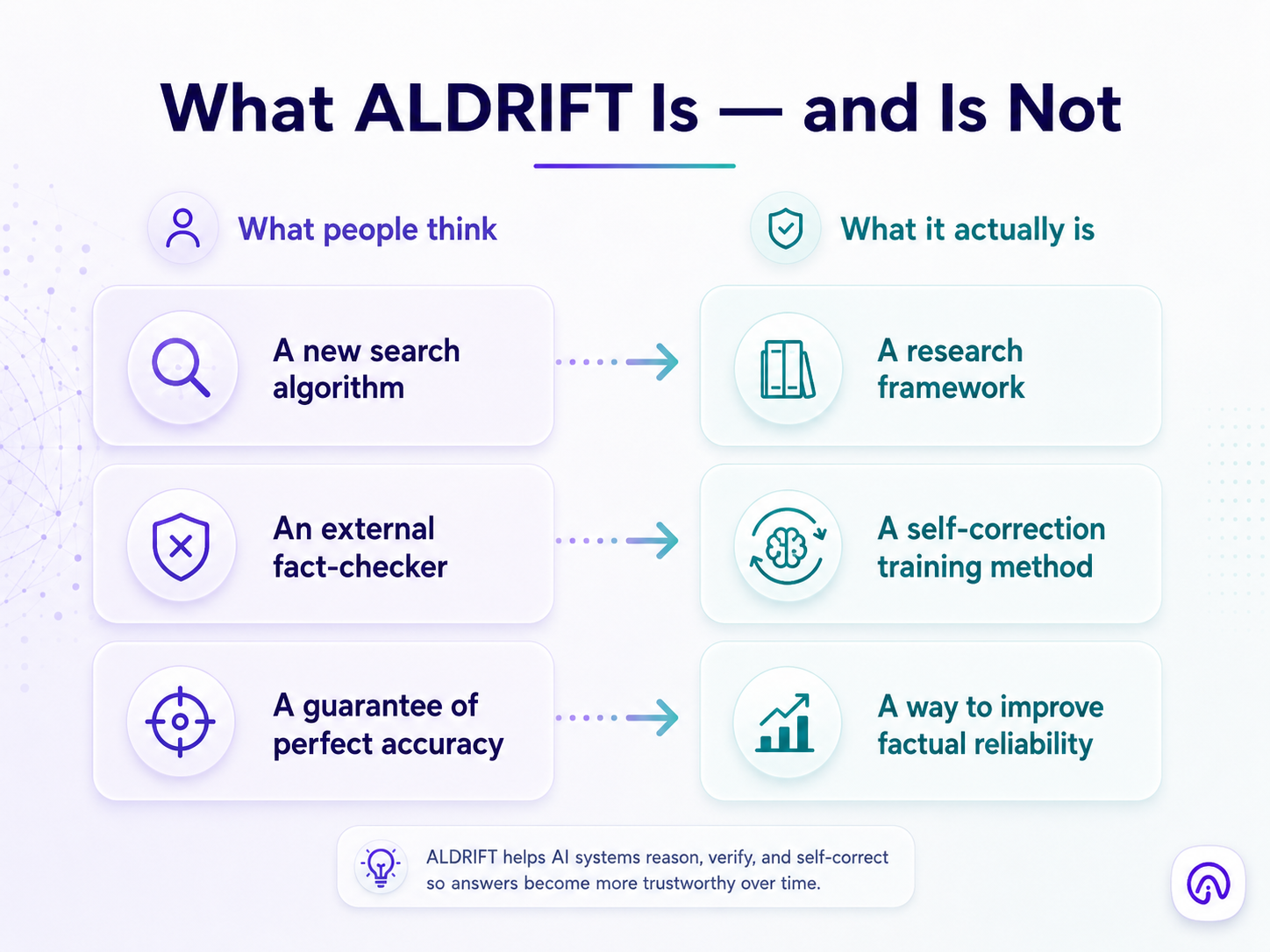

What ALDRIFT Is NOT: Correcting Common Misconceptions

It is not a new search algorithm (yet). ALDRIFT is research, not a live update in the mold of Penguin or Panda. The ideas can shape future systems, but there’s no “ALDRIFT update” rolling into search results today. Treating it like a ranking factor is jumping ahead of the evidence.

It is not just about fact-checking. One option is to bolt a fact-checker onto an LLM after the answer is generated. ALDRIFT is more ambitious: it trains the model to be more factual by wiring correction into the training loop itself. The aim is fewer errors at the source, not a cleanup pass after the model has already committed to a claim.

It is not a guarantee of 100% accuracy. The research is about meaningfully improving the accuracy of AI answers Google ships, not promising perfection. In other words: less wrong, more often. For content teams, that nuance matters: the standard is trending toward “provably reliable,” not “never mistaken.”

The SEO Impact: Preparing for a More Fact-Conscious Google

The SEO impact of adaptive generative models is easy to map: as AI gets better at telling verified information from confident filler, verifiable, well-sourced material gains leverage. For SEO in light of the ALDRIFT framework, the signals start shifting away from keyword density and broad topical coverage alone and toward demonstrable accuracy and citation quality.

Generative Engine Optimization (GEO) puts factual accuracy signals at the front of the queue. Clear sourcing, author expertise aligned with E-E-A-T principles, and checkable data points become the currency of AI-powered answer engines. Content that’s mostly a remix (re-spun from other pages without original verification) stands a higher chance of getting ignored in generative answers. The AI Search Visibility Optimization playbook for 2026 spells out what that shift looks like in day-to-day execution.

| Content Tactic | Old SEO (Plausibility-Focused) | New SEO (Accuracy-Focused) |
|---|---|---|
| Keyword Strategy | Stuff keywords for density | Semantic relevance anchored to verifiable claims |
| Content Volume | Publish more to rank more | Per-page verifiable accuracy outweighs raw volume |
| Linking Approach | Internal links to spread crawl equity | External citations to authoritative primary sources |
| Expertise Signals | Generic E-A-T boilerplate | Demonstrable expertise via author credentials and original data |

The shift from plausibility-optimized content to accuracy-optimized content under frameworks like ALDRIFT.

Thinking beyond traditional SEO is becoming table stakes. If search systems are trained with ALDRIFT-style correction loops, they’ll keep getting better at detecting low-quality content, and the usual shortcuts get more expensive. The ability to structure URLs for AI search and present information in machine-parseable formats becomes another practical edge.

Your 5-Point Content Checklist for the ALDRIFT Era

These five moves match the direction ALDRIFT points to on factual accuracy, and you can implement them now. There is no need to wait for any research concept to show up in production.

- Prioritize Verifiable Claims. Link to primary sources, studies, and datasets. When you make a claim, attach a named source and a date. Accuracy-first systems punish unsupported assertions first.

- Cite with Precision. Skip the homepage link when a specific report, page, or section is what supports your statement. Granular citations read as more trustworthy to humans and are cleaner signals for machine evaluators.

- Structure for Clarity. Use structured data (FAQ schema, How-to schema, plus clear heading hierarchies) so facts are explicit. Generative systems can’t cite what they can’t parse.

- Audit for Accuracy. Re-check cornerstone pages for outdated stats, broken source links, and claims that have been superseded. Factual decay is predictable, and it quietly erodes credibility.

- Showcase Expertise. Strengthen E-E-A-T signals with detailed author bios, transparent about pages, and original research. Trust is earned with specifics, not boilerplate.
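
For the “Structure for Clarity” item, here is a minimal sketch of generating FAQ structured data as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` and the sample question are illustrative assumptions. Whether a given search or answer engine actually consumes this markup is not something the research establishes.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Sample pair for illustration; replace with the page's real Q&A content.
pairs = [
    (
        "Is Google ALDRIFT part of the live search algorithm now?",
        "No. ALDRIFT is a Google Research framework, not a deployed search algorithm update.",
    ),
]

markup = json.dumps(faq_jsonld(pairs), indent=2)
# Wrap the JSON in the script tag used to embed JSON-LD in an HTML page.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same question and answer text that appears on the page helps keep the visible content and the structured data from drifting apart.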

Key Takeaways

- Google ALDRIFT is a research framework (not a live algorithm) that trains AI to self-correct plausible but inaccurate answers through iterative refinement.

- It uses a two-stage loop: generate multiple candidate answers, then score and correct them against known facts.

- ALDRIFT targets the plausibility problem in LLMs, where statistical likelihood gets mistaken for truth.

- For SEO and GEO teams, the direction is unmistakable: verifiable accuracy, precise citations, and demonstrable expertise are becoming requirements, not nice-to-haves.

- Content that only sounds authoritative (without factual grounding) gets riskier as adaptive generative models improve.

Frequently Asked Questions about Google ALDRIFT

Is Google ALDRIFT part of the live search algorithm now?

No. ALDRIFT is a Google Research framework, not a deployed search algorithm update. It may shape future AI systems, but there’s no “ALDRIFT update” changing rankings today.

How is ALDRIFT different from Google's E-E-A-T guidelines?

E-E-A-T is a quality framework used by human evaluators when assessing web content. ALDRIFT is a technical training method for AI models. E-E-A-T describes what Google wants to reward; ALDRIFT is about teaching models to privilege factual accuracy while generating answers. They’re related, but they operate at different layers.

Will ALDRIFT affect my website's rankings?

Not directly or immediately. The signal, though, is that Google is investing in AI systems that reward factual accuracy over plausible phrasing. Content built on verifiable claims and authoritative sourcing is better positioned if and when those systems influence how generative answers choose and cite sources.

What is the 'plausibility problem' in AI?

It’s the tendency of large language models to produce text that sounds correct and confident while containing factual errors. Because LLMs predict likely word sequences instead of verifying truth, they can generate hallucinations that are hard for users to spot.

How can I make my content more 'ALDRIFT-friendly' for SEO?

Anchor claims in named sources, link citations to the exact supporting evidence, add structured data for machine readability, run regular accuracy audits, and make expertise signals explicit (for example, detailed author bios). Those practices line up with the direction ALDRIFT represents and strengthen your content for AI Search Visibility Optimization.